Introduction

In STEM applications for Oxbridge and the G5, the pathways of Oxford Physics and Cambridge Natural Sciences admissions have always attracted the world’s finest scientific minds. Students choosing this route usually hold straight A*s and a standard portfolio of medals from top Olympiads like the BPhO and UKChO. Their solid academic foundations give them the confidence to handle intense pressure and rigorous challenges.

However, with premier universities such as Oxford, Cambridge, and Imperial College London fully adopting the ESAT, a new pain point has emerged: when top contenders across engineering, physics, and natural sciences are all measured against the same “yardstick”, many applicants lack an objective frame of reference for judging what score makes them competitive within their specific subject pool.

Without precise positioning, even the strongest candidates can easily suffer from strategic misjudgements in their preparation pace. Combining the latest official Oxbridge admissions macro-data and the ESAT report released by UAT-UK, this article aims to establish an objective benchmark for students striving to reach the pinnacle of science. By clarifying the true scales of selection, we will help you find your exact bearings, enabling you to give it your all with absolute confidence in the upcoming final sprint.

I. The Reality of the Admissions Funnel: Understanding Your Subject Competition Pool

Although the ESAT is a massive standardised test, Oxford and Cambridge still conduct independent screening strictly based on specific courses when issuing interview invitations and final offers. Therefore, before obsessing over admissions test scores, we must first understand the true competitive landscape of our chosen subjects from a historical macro-perspective.

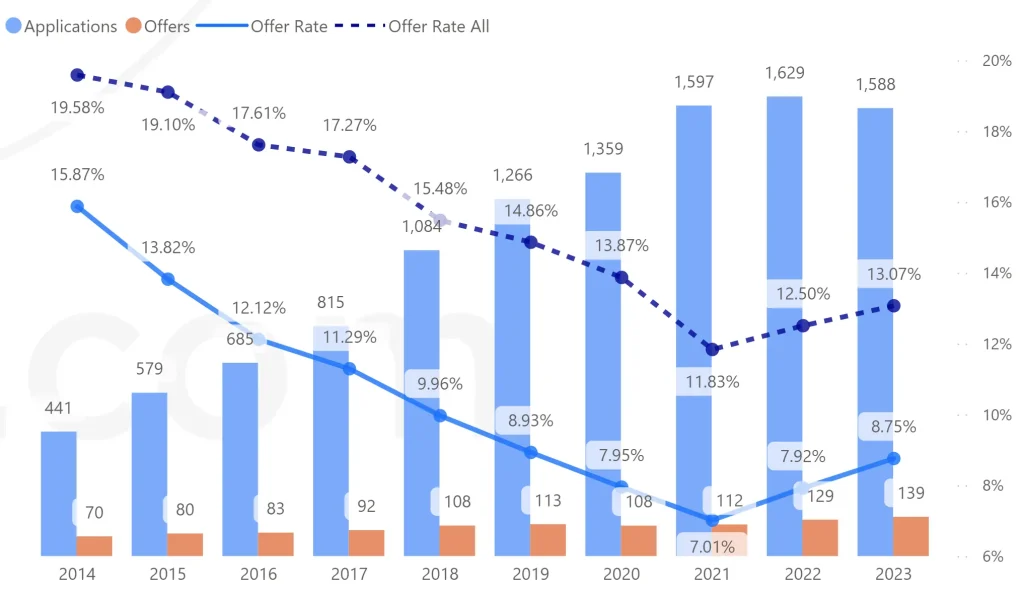

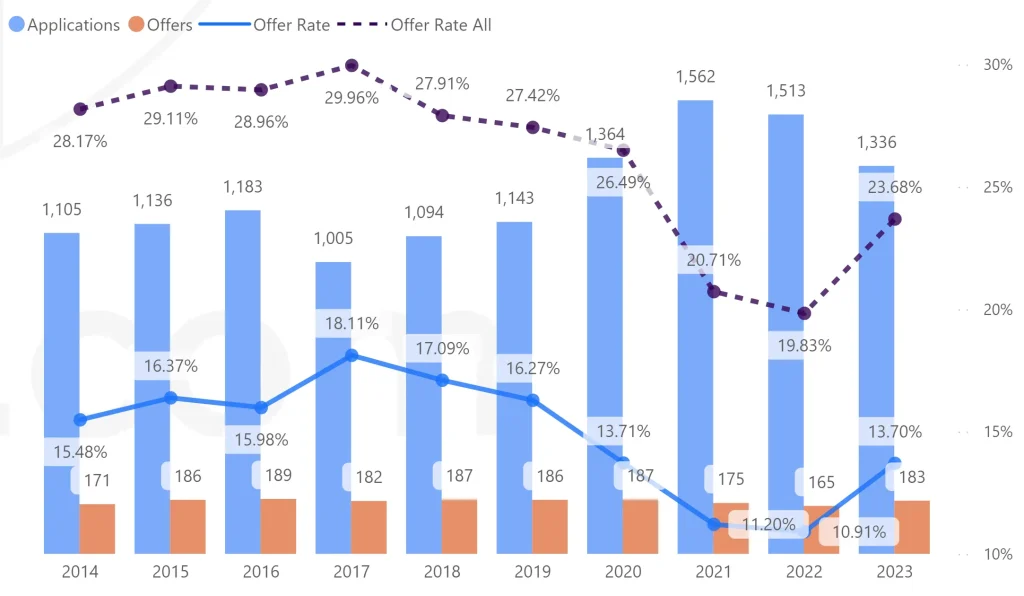

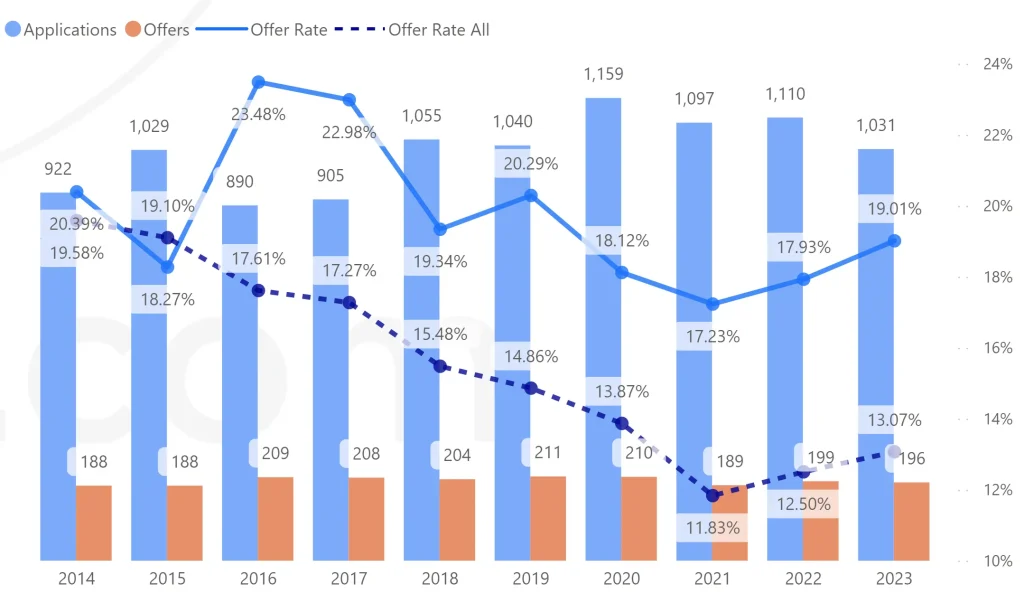

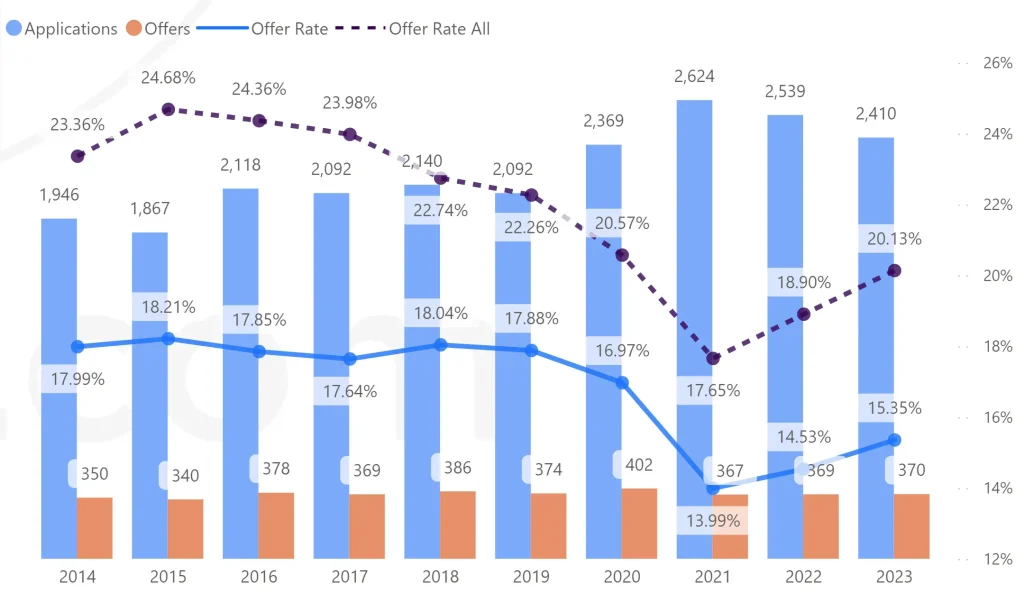

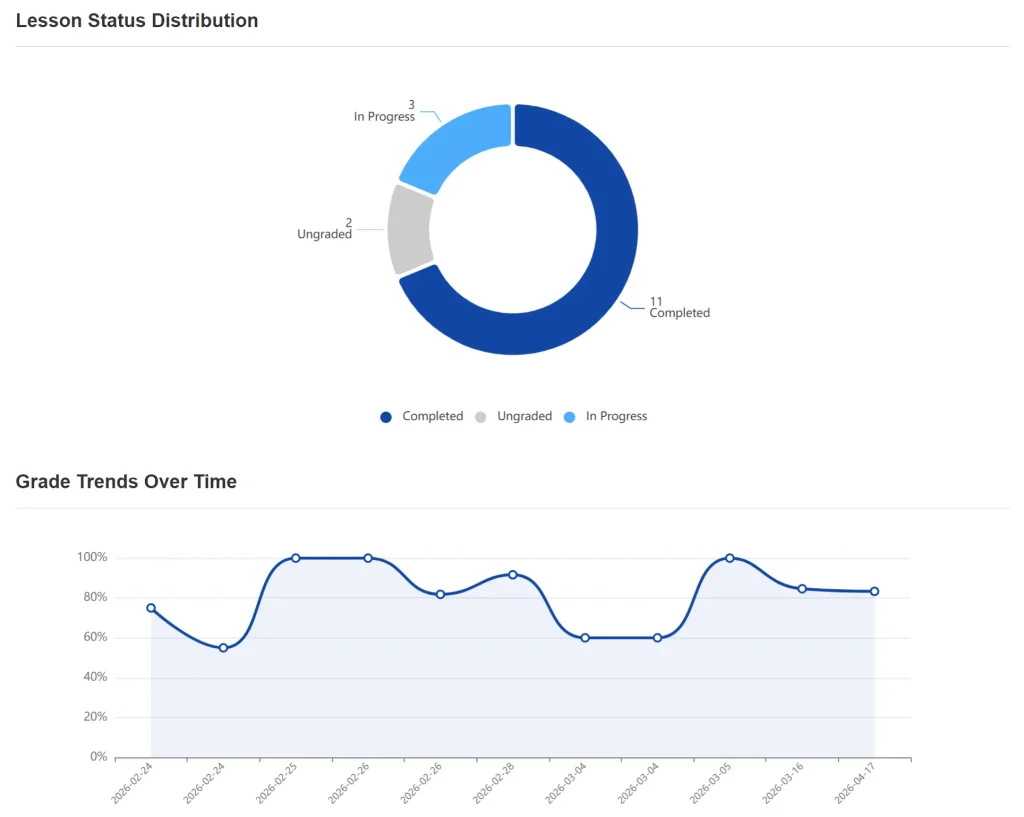

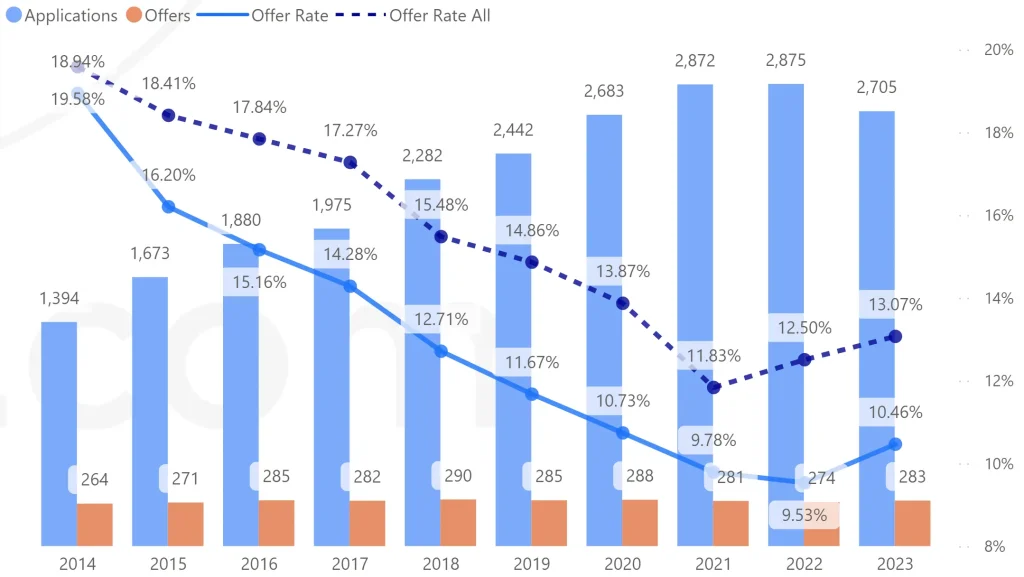

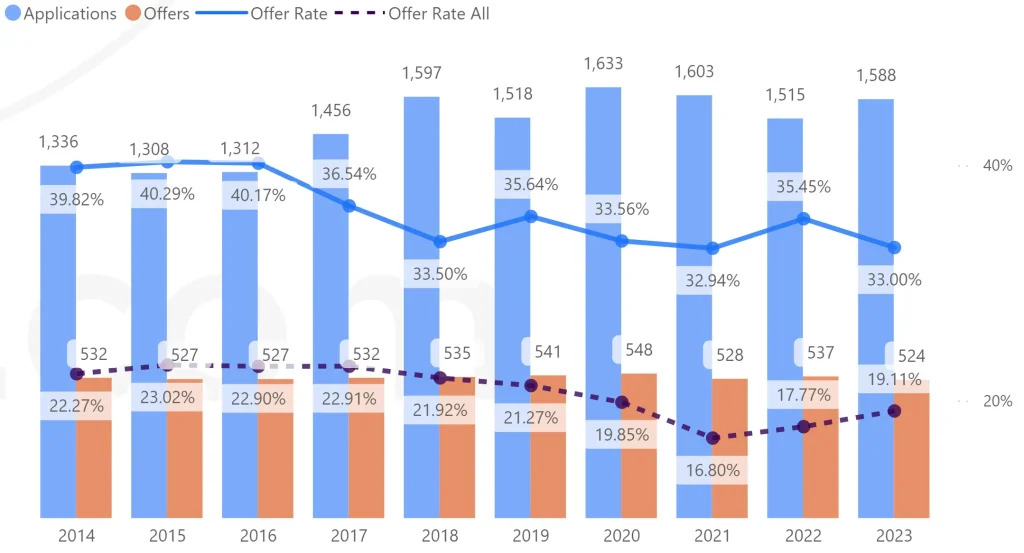

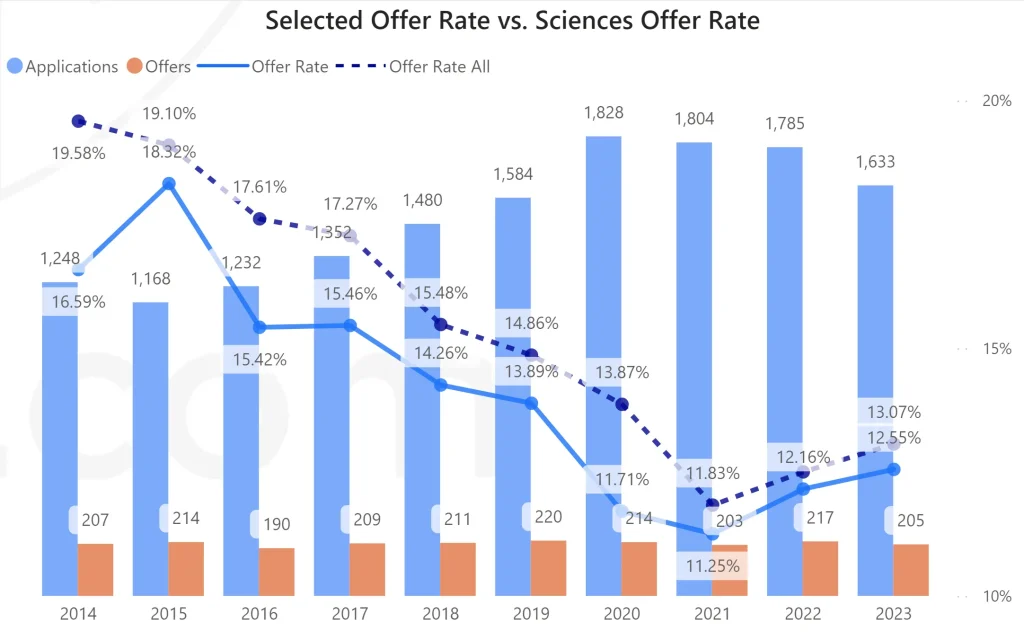

The following two charts show the admissions trends for Oxford Physics and Cambridge Natural Sciences, compiled by UEIE based on official data over the past decade (2014–2023):

Physics Admissions Data at Oxford during 2014–2023 Application Cycles

(Plotted by UEIE based on official data)

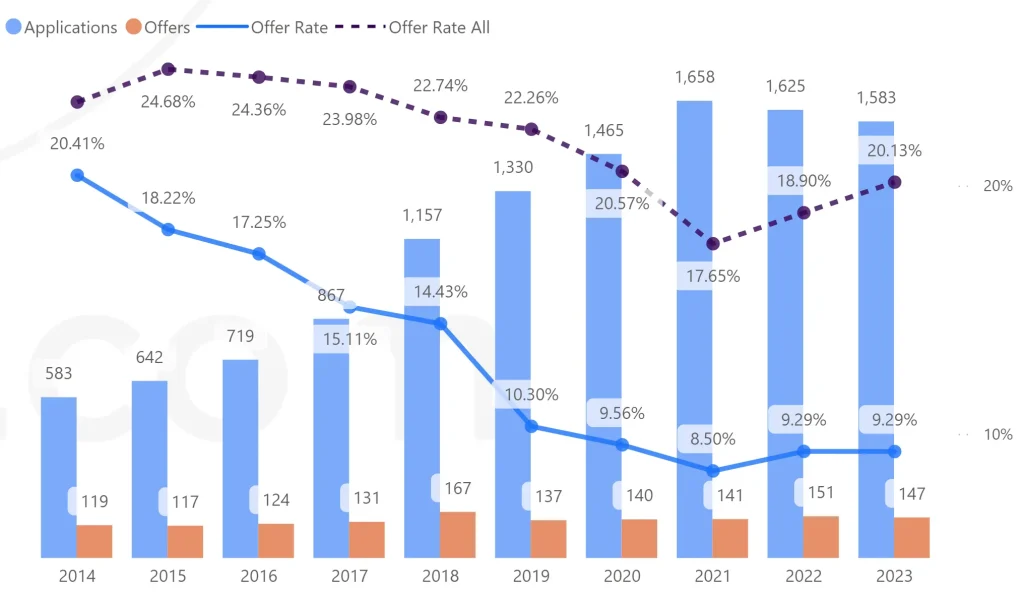

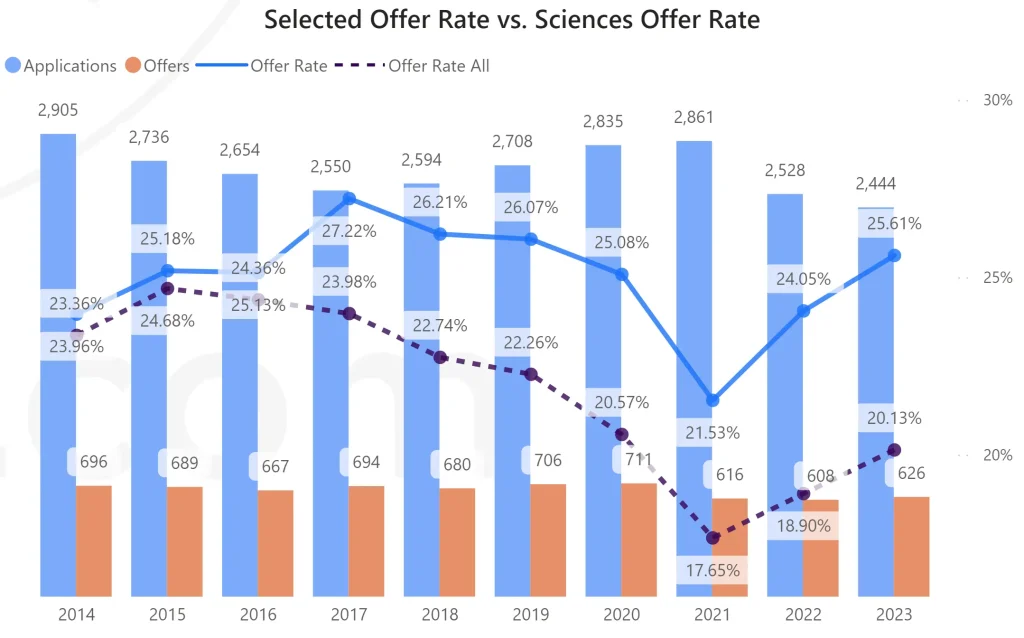

Natural Sciences Admissions Data at Cambridge during 2014–2023 Application Cycles

(Plotted by UEIE based on official data)

These charts clearly reveal a continuous tightening of thresholds for pure science courses:

| Oxford Physics | Over the past decade, application numbers for Oxford Physics have remained consistently high, but the offer rate has fluctuated downwards from 18.32% in 2015 to just 13.07% in 2023. The overall shortlisting rate stands at a mere 33.32%. |
|---|---|
| Cambridge Natural Sciences | As one of Cambridge’s largest courses, it has accumulated over 26,000 applications in the past ten years. Under the pressure of such a massive volume, the university must maintain exceptionally high academic standards to sustain its overall acceptance rate of around 25%. |

To give you a more intuitive sense of how these standards operate during the actual admissions process, I have built the dynamic chart below, “Comparison of Oxford Physics and Cambridge Natural Sciences Admissions Funnels”, based on the underlying data from the latest 2023/24 application cycle. You can try selecting different subjects on both sides and toggle the gender dimension (All / Women / Men) to view the real rejection ratios:

From the 10-year trends and the latest funnel diagrams, we can objectively extract the key admissions patterns for Oxford Physics and Cambridge Natural Sciences:

1. The 30% Shortlisting Threshold for Oxford Physics

Looking at the aggregate data for Oxford Physics, out of 4,579 applicants holding straight A*s, only 1,419 ultimately received interview invitations, representing a shortlisting rate of around 31%. This means that despite everyone possessing glittering profiles, nearly 70% of candidates are knocked out in the first round. The core criterion determining this top third is the admissions test score (which was the PAT prior to 2026). Furthermore, highly niche, interdisciplinary courses demanding both humanities and sciences proficiency, such as Oxford Physics and Philosophy, see an overall acceptance rate as low as 8.3%.
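The shortlisting arithmetic above can be checked in a few lines. This is only an illustrative sketch: the helper name `stage_rate` is hypothetical, and the two counts are the aggregate Oxford Physics figures quoted in this section.

```python
def stage_rate(passed: int, total: int) -> float:
    """Return the share of `total` that passed a stage, as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * passed / total

# Aggregate Oxford Physics figures quoted in this section.
applicants = 4579
shortlisted = 1419

shortlist_pct = stage_rate(shortlisted, applicants)
rejected_pct = 100.0 - shortlist_pct

print(f"Shortlisted for interview: {shortlist_pct:.1f}%")
print(f"Eliminated before interview: {rejected_pct:.1f}%")
```

The same helper can be reused for any other stage of the funnel (for example, offers out of interviews) once you have the corresponding counts.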

2. The "Hidden Bifurcation" in Cambridge Natural Sciences

The gender distribution for Cambridge Natural Sciences in the 2023/24 cycle appeared remarkably balanced (1,284 male applicants and 1,160 female applicants), with the acceptance rate holding steady at around 25%. However, when cross-referenced with the option-selection profiles published by the ESAT board, a profound internal division emerges: 59% of candidates choosing the Biology module are female, whereas a staggering 74% of candidates selecting the Physics module are male. This indicates that the vast majority of the massive male applicant pool in Natural Sciences is concentrated in the Physical Sciences stream. If you are applying for the physics pathway, your standalone competition pool will be packed with an exceptionally high concentration of top-tier male mathematical and physical talents.

II. Re-evaluating Scores Under a Shared Yardstick: Where is Your "Safe Zone"?

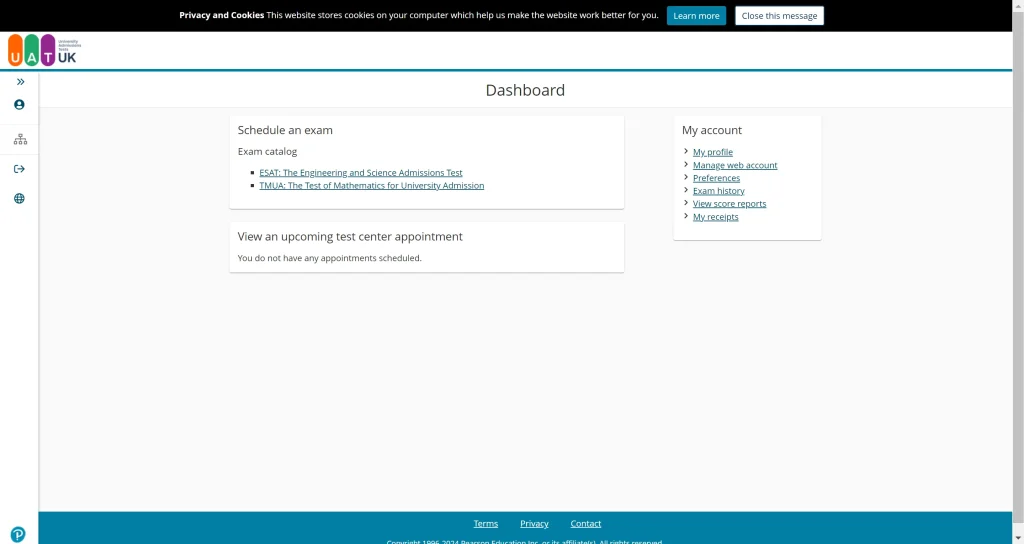

Next, let us look at how the standardised benchmark, the ESAT, impacts preparation positioning.

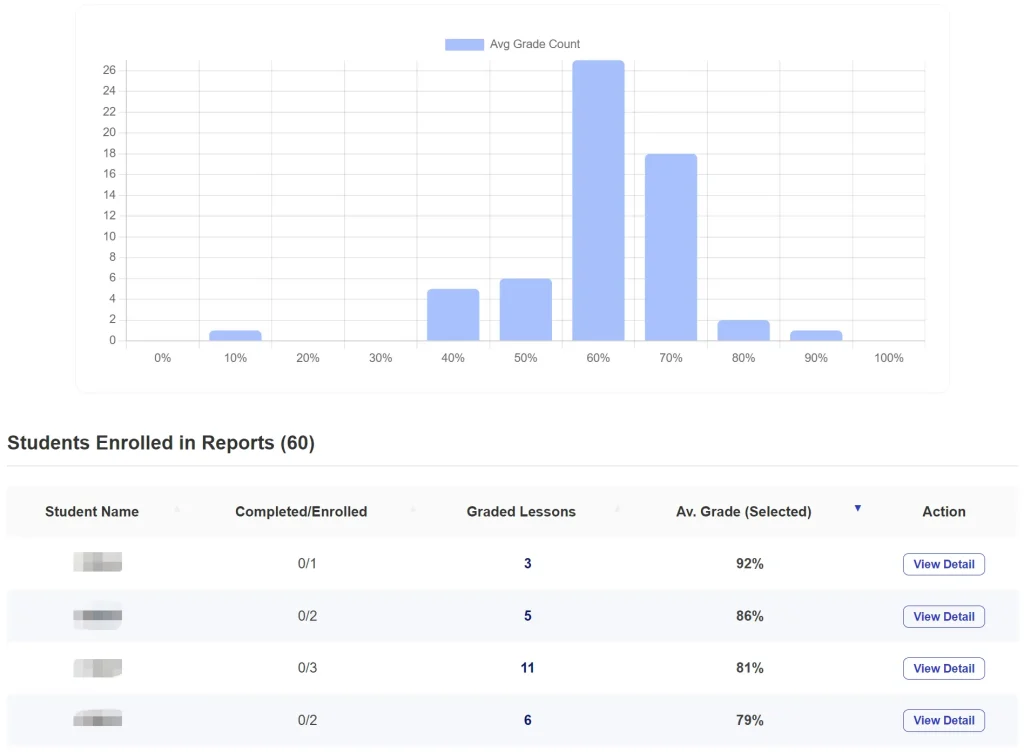

1. Global Macro-Perspective: The Average Quagmire vs Extreme Polarisation

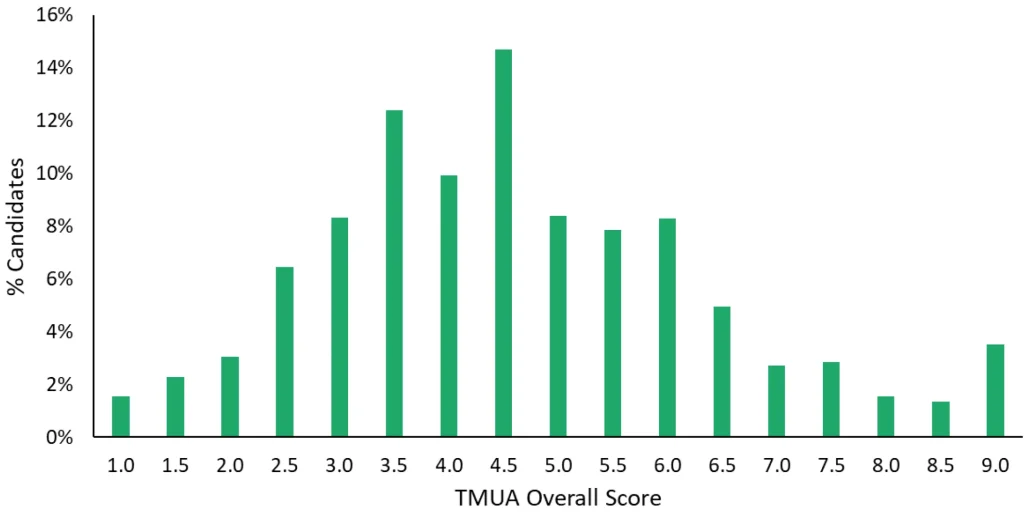

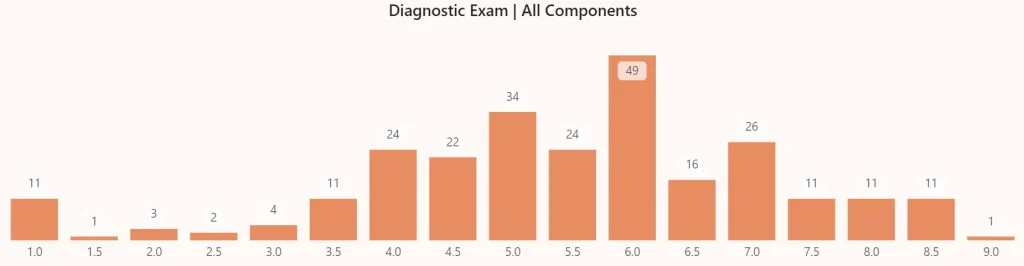

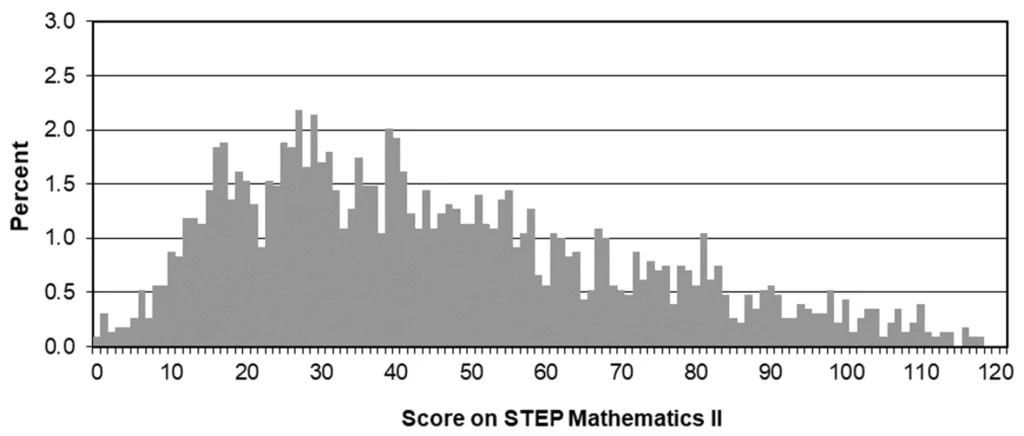

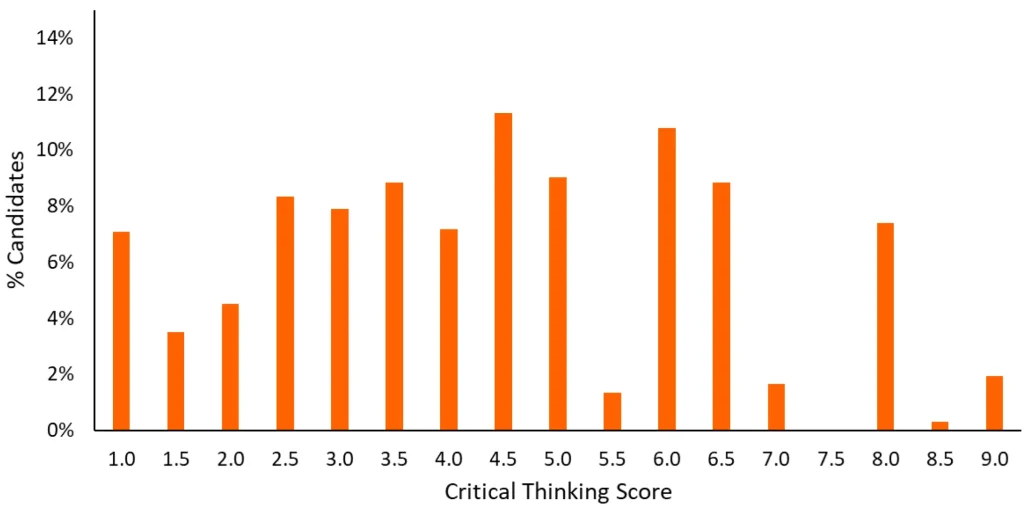

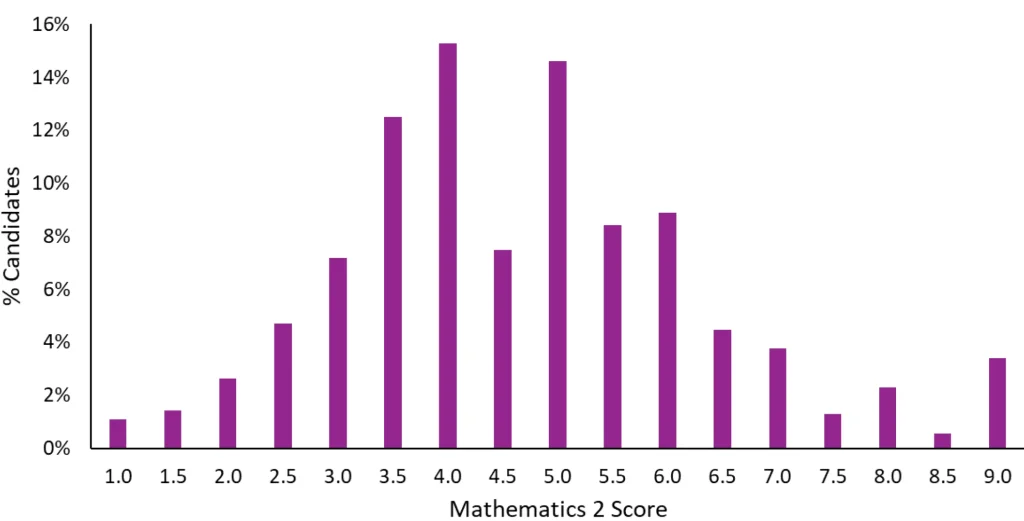

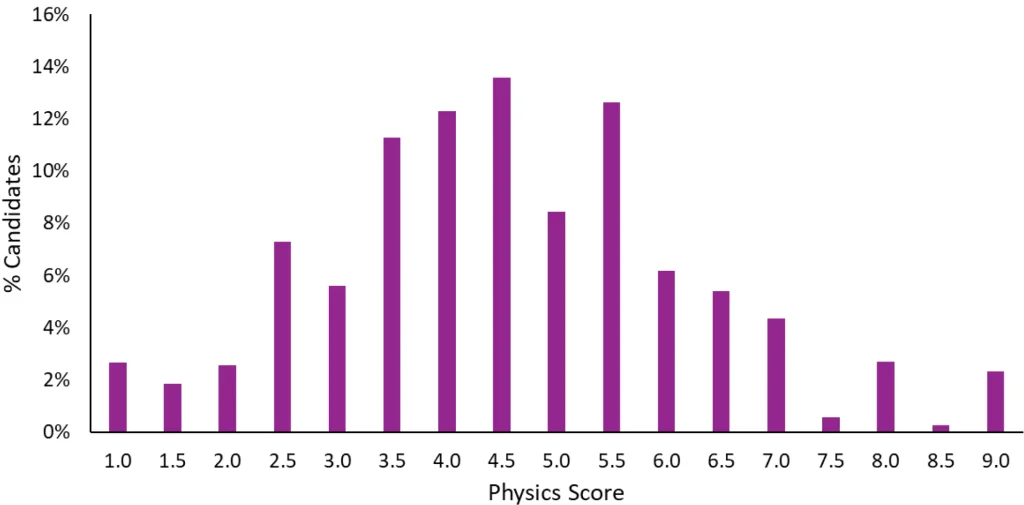

Although applicants for Oxford Physics or Cambridge Natural Sciences do not directly compete for admissions against Engineering applicants, the scores for each ESAT module are ranked together globally. According to the official UAT-UK report, out of nearly 12,000 candidates worldwide, a staggering 72% (8,564 students) opted for the exact same combination: Mathematics 1 + Mathematics 2 + Physics. Consequently, many students look to the official score distributions when benchmarking their targets, as shown below.

Global Score Distribution for ESAT Maths 1, Maths 2, and Physics — October 2025

(Screenshot from the Official UAT-UK Report)

By carefully examining these three official distribution charts, several distinct characteristics become apparent:

- Clustering in the Common Score Range

Whether in Mathematics 1, Mathematics 2, or Physics, a vast number of candidates score between 4.0 and 5.0, forming the prominent main peak of a normal distribution.

- Steep Decline in the Right-Hand Long Tail

From 6.0 onwards, the proportion of candidates achieving these scores drops sharply. This indicates that scores above 6.0 begin to demonstrate strong differentiation power.

- The “Uptick” at the Full Marks Range

At the extreme right edge of the chart (the 9.0 perfect score band), there is a noticeable rebound. This reveals a small, elite cohort of top-tier candidates with exceptional mathematical and physical abilities who distance themselves significantly from the general crowd.

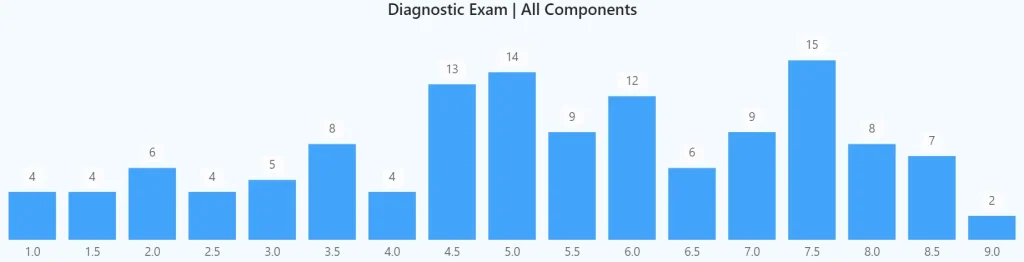

2. Re-evaluating Your Competitive Coordinates: The 8.0 Benchmark for Chinese Applicants

We have compiled the Comparison of ESAT Scores between UK and Chinese Candidates from the official UAT-UK report:

Comparison of ESAT Module Scores: Chinese vs. UK Candidates (2024/25 Application Cycle)

| Module | Country or Region | Number of Candidates | Average Score | 25th Percentile | 50th Percentile | 75th Percentile | 90th Percentile |
|---|---|---|---|---|---|---|---|
| Maths 1 | UK | 6031 | 3.93 | 3.1 | 3.9 | 4.8 | 5.6 |
| Maths 1 | China | 2568 | 5.91 | 4.7 | 5.8 | 7.1 | 8.5 |
| Maths 2 | UK | 4929 | 4.07 | 3.1 | 4.1 | 5.0 | 5.7 |
| Maths 2 | China | 2197 | 5.68 | 4.5 | 5.6 | 6.8 | 8.2 |
| Physics | UK | 4657 | 4.15 | 3.2 | 4.1 | 5.0 | 6.0 |
| Physics | China | 1961 | 5.58 | 4.5 | 5.6 | 6.8 | 8.0 |

* Source: UAT-UK Official Report

Synthesising the distribution charts and tabular data, Chinese applicants must face a hard truth: the global macro average carries very little reference value.

The ESAT is marked out of 9.0, with the global median anchored at 4.5. However, Chinese candidates perform exceptionally well; the mean score for Mathematics 1 has already reached 5.91, while the top 10% (90th percentile) threshold has been pushed up to soaring heights: 8.5 in Mathematics 1, 8.2 in Mathematics 2, and 8.0 in Physics. This cohort scoring 8.0 or even 9.0 forms the backbone of the “uptick” phenomenon on the far right of the chart.

Applicants seeking admission to Oxford Physics or the Physics orientation of Cambridge Natural Sciences essentially sit at the absolute apex of this STEM track. In this fiercely competitive, independent subject pool, your admissions test scores must not only far exceed the global average but should ideally approach the top 10% of Chinese candidates. Therefore, set your targets objectively: an 8.0 is not an impossibly high score, but rather the baseline benchmark for securing one of those top 30% interview places and a subsequent offer in Oxford Physics or Cambridge Natural Sciences.
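To see where a given mock score sits relative to each cohort, the published percentile thresholds can be turned into a simple lookup. The sketch below is an illustration under stated assumptions: the function name `percentile_band` and the dictionary layout are hypothetical, only the Physics rows of the table are included, and the result is a coarse band rather than an exact percentile.

```python
from bisect import bisect_right

# (percentile, score) thresholds for the Physics module, copied from the table.
PHYSICS_THRESHOLDS = {
    "UK": [(25, 3.2), (50, 4.1), (75, 5.0), (90, 6.0)],
    "China": [(25, 4.5), (50, 5.6), (75, 6.8), (90, 8.0)],
}

def percentile_band(score: float, thresholds: list[tuple[int, float]]) -> str:
    """Describe which published percentile band a score falls into."""
    cut_scores = [cut for _, cut in thresholds]
    # Number of thresholds that the score meets or exceeds.
    idx = bisect_right(cut_scores, score)
    if idx == 0:
        return f"below the {thresholds[0][0]}th percentile"
    if idx == len(thresholds):
        return f"at or above the {thresholds[-1][0]}th percentile"
    lower, upper = thresholds[idx - 1][0], thresholds[idx][0]
    return f"between the {lower}th and {upper}th percentiles"

for cohort, thresholds in PHYSICS_THRESHOLDS.items():
    print(f"Physics 7.0 vs {cohort}: {percentile_band(7.0, thresholds)}")
```

A Physics score of 7.0 clears the UK 90th percentile yet still falls short of the Chinese 90th percentile, which is the gap this section describes.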

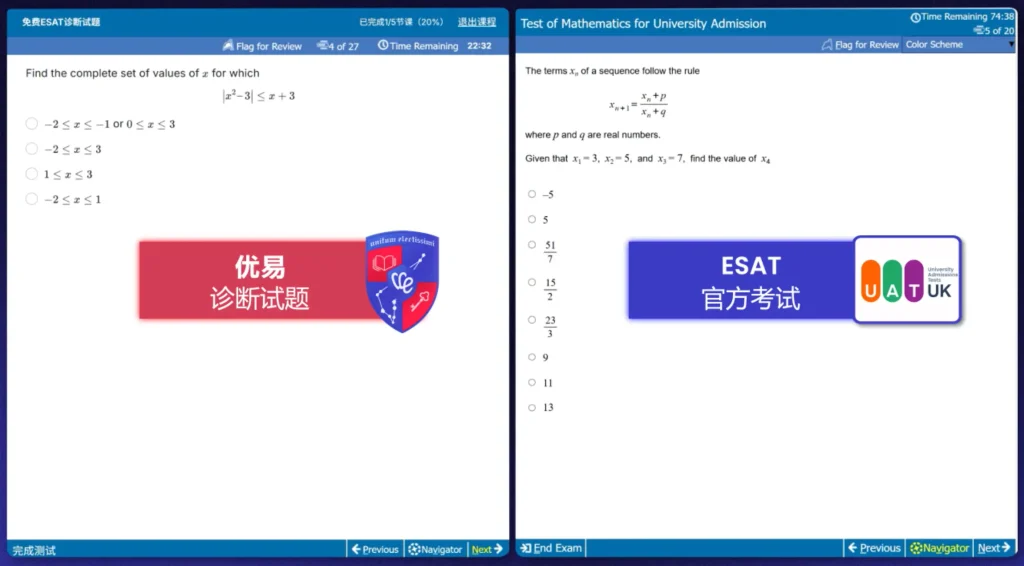

III. The Two Touchstones of the ESAT: Extreme Time Management and Pure Mathematical Intuition

Having established the score benchmarks for your specific subject pool, many candidates with extensive competition backgrounds might wonder: given that the ESAT syllabus does not exceed the high school scope, why is scoring above 8.0 still so difficult among this elite scientific cohort?

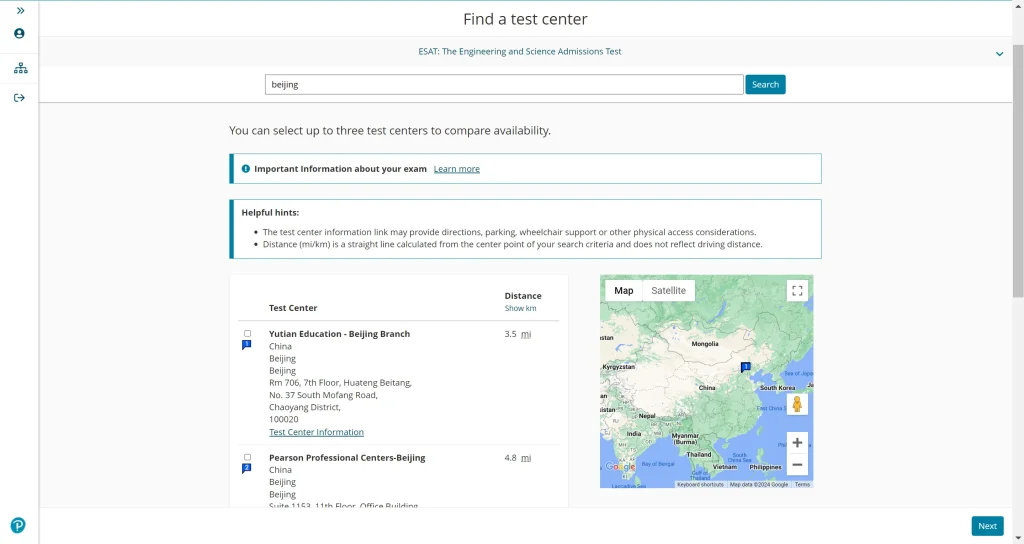

By examining two key chart datasets regarding “percent of unreached item” and “scaled score by first language” from the official UAT-UK report, we can clearly identify the two most common blind spots science students encounter when preparing for this computer-based test.

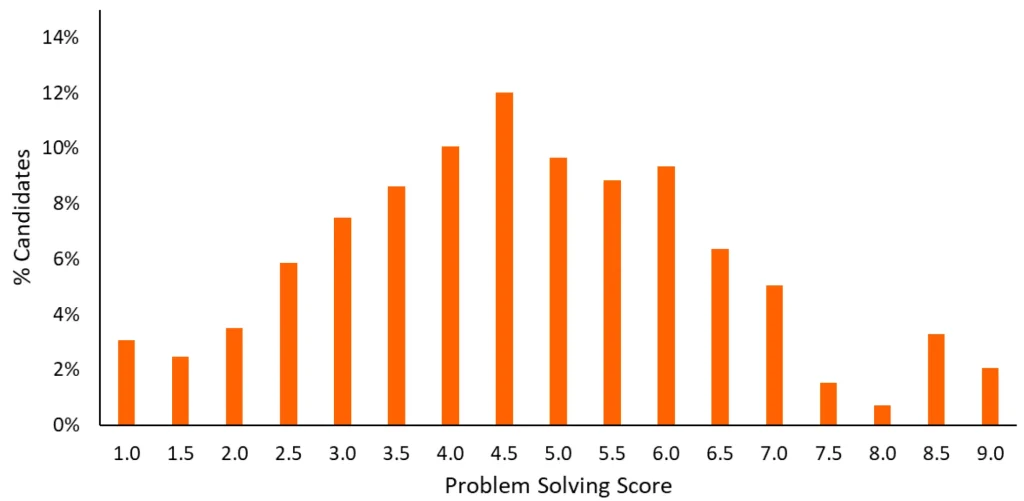

1. Extreme Time Management: The Barrier Between Academic Depth and Problem-Solving Efficiency

As a highly standardised computer-based test, the ESAT does not merely test academic depth; it is a brutal test of time management and efficiency. Each module requires candidates to tackle 27 multiple-choice questions in 40 minutes, meaning the average time allocated for reading, analysing, and answering each question is under a minute and a half (approximately 89 seconds).
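The per-question budget follows directly from the module format, and the same arithmetic gives rough pacing checkpoints for mock exams. This is a minimal sketch assuming an even pace across all questions; the function name `checkpoint_minutes` and the choice of checkpoints are illustrative, not part of any official guidance.

```python
QUESTIONS = 27   # multiple-choice questions per module
MINUTES = 40     # time allowed per module

seconds_per_question = MINUTES * 60 / QUESTIONS

def checkpoint_minutes(question_number: int) -> float:
    """Elapsed minutes by which `question_number` should be done at an even pace."""
    return question_number * seconds_per_question / 60

print(f"Average time per question: {seconds_per_question:.1f} s")
for q in (9, 18, 27):
    print(f"Finish question {q} by minute {checkpoint_minutes(q):.1f}")
```

In practice, an even pace is only a baseline: banking time on the easier early questions leaves a buffer for the harder ones near the end.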

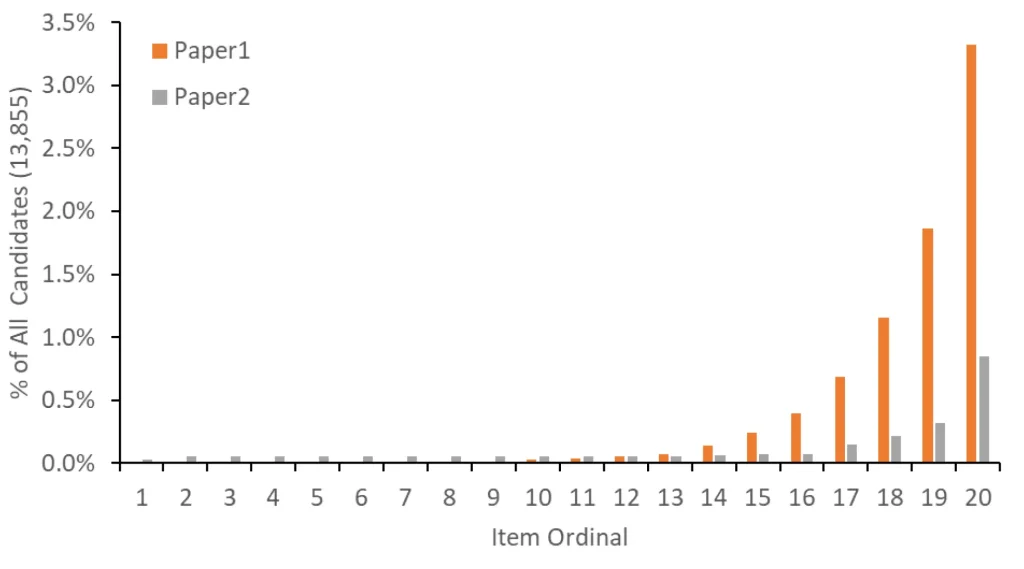

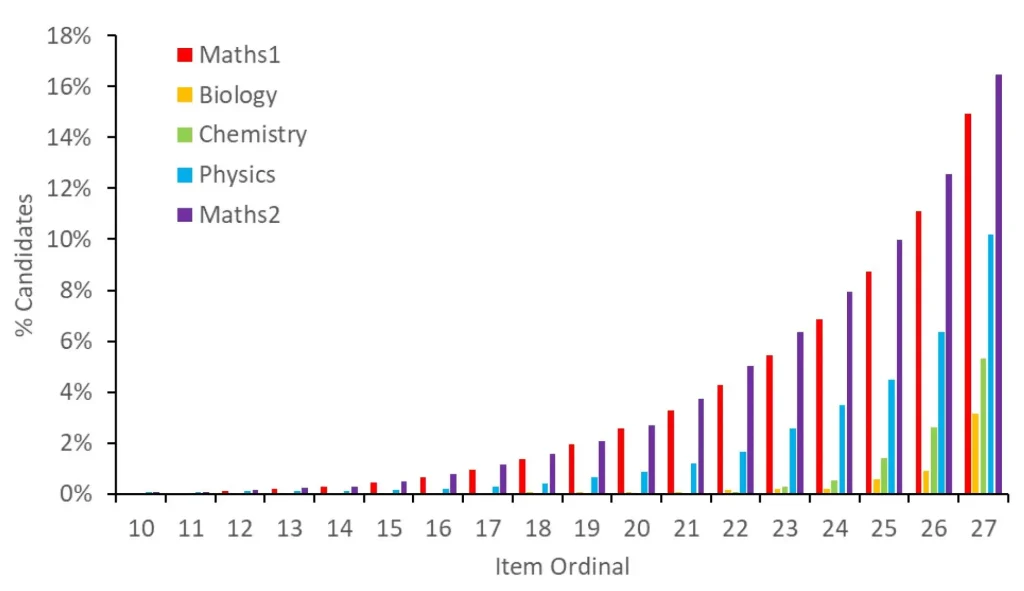

Percentage of Candidates Failing to Reach Questions by Item Ordinal Across ESAT Modules

(2024/25 Application Season)

Observing the official trend chart above, the red bars representing Mathematics 1 and the purple bars representing Mathematics 2 display an incredibly sharp upward surge after question 20. This visually captures how a vast number of outstanding candidates lost their rhythm under the pressure of intense question volume. The underlying official data further confirms this reality:

- Time Depletion in Mathematics 2

A staggering 52% (more than half) of candidates experienced “running out of time or being forced to blind-guess and submit within the final 5 seconds (Unreached/Low Time)” in this module.

- The Squeeze in Mathematics 1 and Physics

In Mathematics 1, a compulsory module for all science applicants, 46% of candidates failed to comfortably finish all questions; meanwhile, the proportion of candidates facing time exhaustion in the Physics module reached 31%.

These cold figures expose an undeniable truth: having solid academic foundations does not automatically translate to a high score in a computer-based test. Many students applying for Physics or Natural Sciences are accustomed to long, deep formula derivations in high-level competitions like the BPhO or UKChO. However, when confronting the ESAT, adhering to standard, step-by-step problem-solving habits leaves them highly vulnerable to facing a wall of unread questions in the final minutes. Only by transforming deep academic strength into intuitive reflexes and decisive prioritisation under high pressure can you smoothly surmount this temporal barrier.

2. Stripping Away the Linguistic Shell: No Excuses in Pure Mathematical and Physical Duels

When hitting a wall in admissions tests, many domestic students tend to blame it on a “heavy English reading load, where long and complex sentences slowed down speed.” However, the official statistical macro-data completely eliminates this confounding variable.

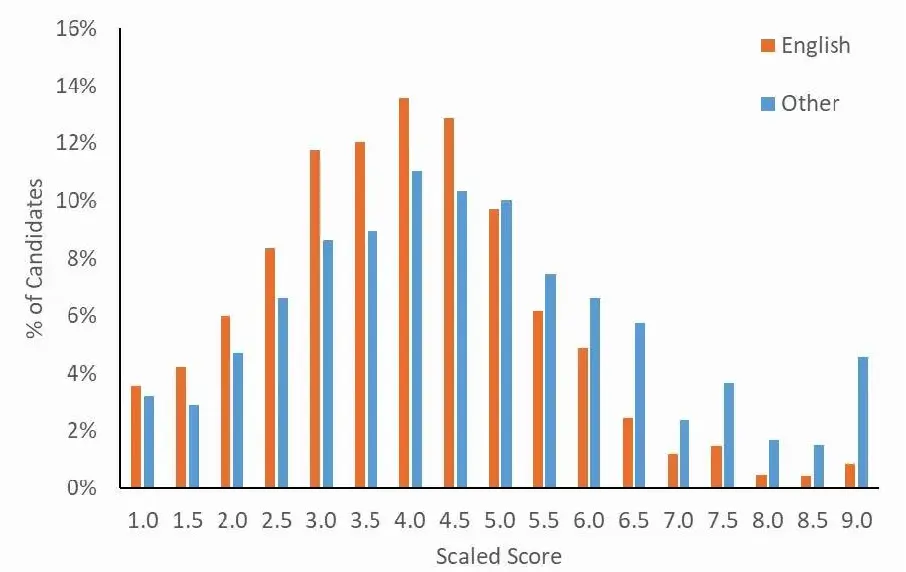

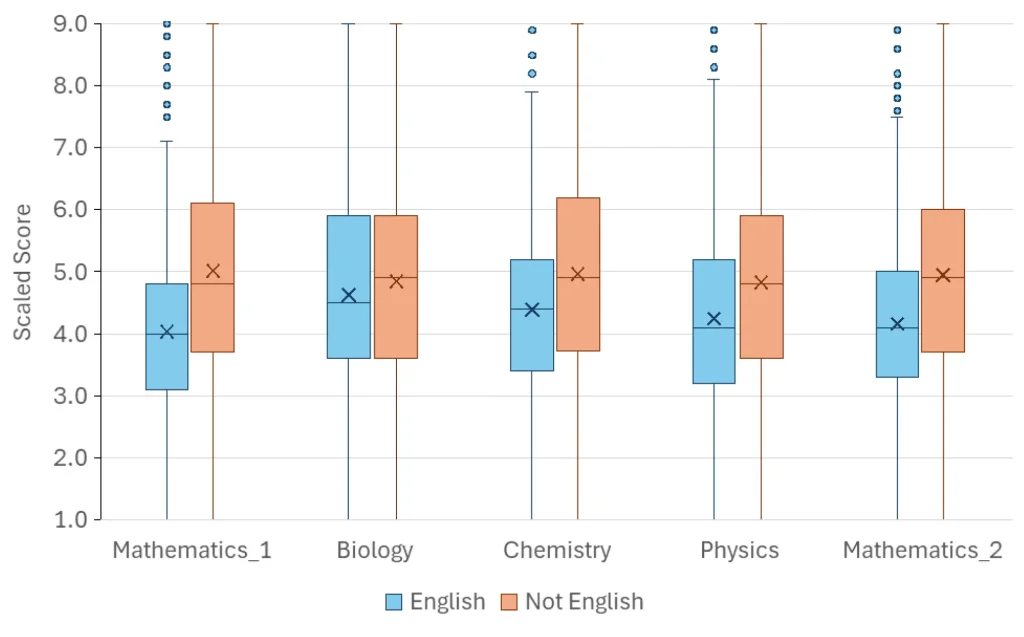

Comparison of Score Distribution by First Language: English vs Others

(Screenshot from the Official UAT-UK ESAT Report Released in September 2025)

Examining the box and whisker plot above closely, we observe a counter-intuitive phenomenon: across all five modules compared, the orange boxes (candidates whose first language is not English) have medians and interquartile ranges that sit noticeably higher than the light blue boxes (candidates whose first language is English). This implies that candidates whose first language is not English outperform native English speakers across the board.

The official report explicitly notes that the ESAT is a typical “low language load” test.

This objective reality reminds us that in this high-level clash of scientific minds, language disadvantage is not a valid excuse. The test directly measures a candidate’s command of algebra, calculus, and mechanics. If you cannot break into the high-score tier during mock exams, you must honestly attribute it to internal factors: fundamentally, it comes down to a lack of fluency in core knowledge points or shortcomings in computational thinking.

IV. The Essence of the Academic Interview: What Kind of "Scientific Brain" is Oxbridge Seeking?

Having cleared the ~30% shortlisting line of the admissions test, successful candidates finally find themselves sitting across from Oxbridge professors.

At this stage, the focus of the interview shifts completely away from standardised “problem-solving speed” toward unearthing an individual’s deep academic potential. The official admissions guides for Oxford Physics and Cambridge Natural Sciences clearly state that the core of the interview is not about how much advanced, out-of-syllabus knowledge you have memorised, but rather your physical intuition, ability to abstract and simplify, and mathematical facility.

Aligned with the official selection rationale of both universities’ science faculties, the “ideal brain” in the eyes of professors manifests primarily in three dimensions:

1. Physical Intuition: Extracting Core Laws from Complex Phenomena

Unlike engineering interviews, which like to test practical tasks such as “designing a dam or a bridge”, interviews for Oxford Physics and Cambridge Natural Sciences admissions often dive in from a seemingly ordinary natural phenomenon or a highly abstract model.

A classic sample question released by the University of Oxford’s Department of Physics is: “If the Earth were hollow and you jumped into a hole drilled straight through the centre, what would your motion look like?” Cambridge Natural Sciences interviewers frequently ask candidates to perform a live estimation of “how much energy is required to boil the entire ocean (Fermi estimation)”.

Professors do not need you to blurt out a precise numerical answer immediately. What they are genuinely observing is your “physical intuition”—whether you can swiftly strip away irrelevant distractions from the question, accurately map this unfamiliar scenario onto fundamental laws you know well (such as Newton’s law of universal gravitation, conservation of energy, or simple harmonic motion), and formulate a minimalist initial physical model.
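As an illustration of such a minimal model, here is one way the Oxford sample question might be sketched. It rests on assumptions that are not part of the question itself: the Earth is treated as a uniform-density sphere of mass $M$ and radius $R$ with a narrow tunnel through the centre, and air resistance and rotation are ignored.

At distance $r$ from the centre, only the mass inside radius $r$ attracts the falling body of mass $m$:

$$M(r) = M\,\frac{r^{3}}{R^{3}}, \qquad F(r) = -\frac{G\,M(r)\,m}{r^{2}} = -\frac{G M m}{R^{3}}\,r .$$

The force is proportional to displacement and directed towards the centre, so the motion is simple harmonic:

$$\ddot{r} = -\omega^{2} r, \qquad \omega^{2} = \frac{GM}{R^{3}} = \frac{g}{R}, \qquad T = 2\pi\sqrt{\frac{R}{g}} .$$

Taking $R \approx 6.4\times10^{6}\ \text{m}$ and $g \approx 9.8\ \text{m s}^{-2}$ gives $T \approx 5.1\times10^{3}\ \text{s}$, or roughly 84 minutes for a full oscillation. An interviewer would typically then relax one assumption at a time (non-uniform density, air resistance) and ask how the answer changes.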

2. Thinking at Limits: Deconstructing Physical Changes Graphically via Mathematics

In pure science interviews, “sketching graphs” is an extreme test that almost every candidate will face. A professor might write a complex function on the whiteboard (such as $y=e^{-x}\sin x$) or ask you to plot the potential energy curve between diatomic molecules. The core of this assessment lies in your thinking at limits.

Professors want to see if, without relying on a calculator, you can keenly determine how the physical system collapses or diverges as the variable approaches zero ($x\to 0$) or infinity ($x \to \infty$). In the eyes of elite scientists, true mathematical and physical fluency means seamlessly translating calculus formulae into physical imagery in your mind.
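For the example function $y=e^{-x}\sin x$, the limiting behaviour can be worked out in a few lines. This is one possible route to the sketch, not the only acceptable one.

Near the origin, using $e^{-x}\approx 1-x$ and $\sin x\approx x$:

$$y \approx x - x^{2} \quad (x \to 0),$$

so the curve passes through the origin with gradient 1. For large positive $x$ the sine factor stays bounded while the exponential decays:

$$|y| \le e^{-x} \;\Rightarrow\; y \to 0 \quad (x \to \infty),$$

so the curve oscillates inside the envelope $\pm e^{-x}$, while for $x \to -\infty$ the envelope grows without bound. The zeros and turning points follow from

$$y = 0 \;\Leftrightarrow\; x = n\pi, \qquad \frac{dy}{dx} = e^{-x}(\cos x - \sin x) = 0 \;\Leftrightarrow\; x = \frac{\pi}{4} + n\pi .$$

The first maximum is therefore at $x=\pi/4$, with height $e^{-\pi/4}\sin(\pi/4)\approx 0.32$. These four facts (behaviour at the origin, the envelope, the zeros, the turning points) are enough to draw the graph without a calculator.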

3. Tutorial in Action: Embracing the Unknown and Thinking Aloud

The underlying essence of an Oxbridge interview is a miniature Tutorial or Supervision (the small-group teaching system distinctive to Oxford and Cambridge). The official guides explicitly state that the interview aims to test how you deal with unfamiliar concepts.

As you derive force equations or balance complex chemical reactions on the whiteboard, the professor will deliberately introduce variables you have never encountered before (for instance: “What if there is a non-uniform magnetic field in this space that varies with time?”). Under such immense pressure, getting stuck is entirely normal for candidates, and it is precisely the starting point the professors intend.

At this juncture, the metric that determines success or failure is your teachability. When the professor offers a hint, can you swiftly grasp the pointer, overturn your flawed assumptions from thirty seconds prior, and “think aloud”? Candidates who do not fear making mistakes in the face of high uncertainty, maintain rigorous logic, and display excellent self-correction capabilities are exactly the research apprentices Oxbridge is most eager to recruit.

Conclusion: Measuring Your Sprinting Pace with Objective Data

In this article, we have journeyed from the ~30% shortlisting threshold for admissions at Oxford Physics and the “hidden bifurcation” in Cambridge Natural Sciences, to the baseline benchmark of 8.0+ for Chinese applicants in the global ESAT landscape, and finally to the fundamental assessment of physical intuition and thinking at limits during academic interviews.

Discerning the true metrics of this mechanism allows us to abandon mindless, repetitive drilling and instead allocate our precious time precisely where it matters most. Admission to Oxford Physics and Cambridge Natural Sciences has never been a pure battle of physical stamina; it is a comprehensive game of scientific intuition, problem-solving efficiency, and high-pressure resilience.

Every strategy must be built upon an objective awareness of your current state. Rather than seeking a false sense of security in an endless sea of questions, it is far better to understand your own hand first and locate the true coordinates for your journey forward.

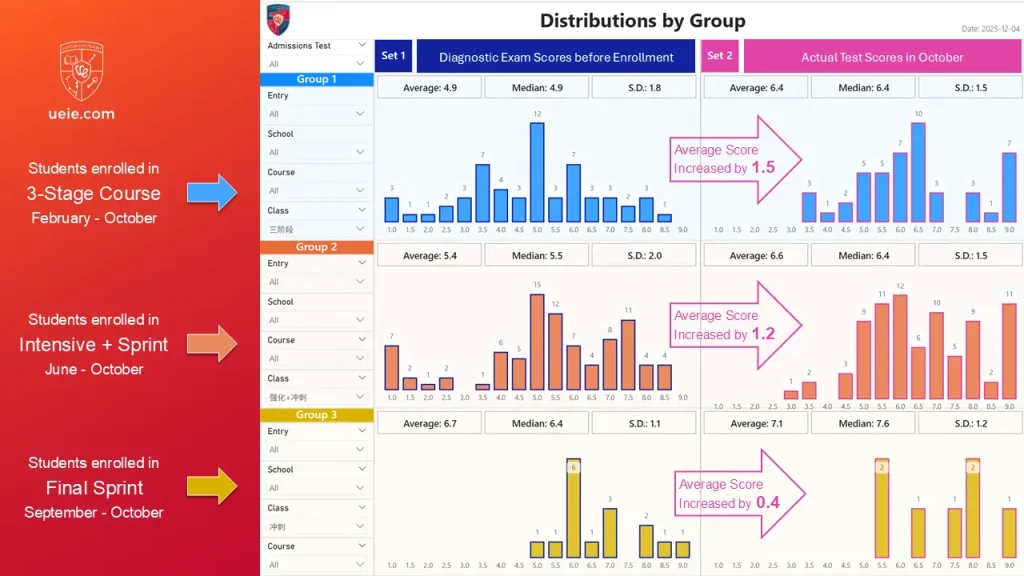

To discover how to internalise threshold-crossing knowledge into rapid problem-solving instincts under the brand-new unified exam system, and how to scientifically plan your revision schedule over the coming months, we highly recommend cross-reading this practical guide:

Oxbridge Admissions Tests Reform: Is Your Preparation Timeline on Track?

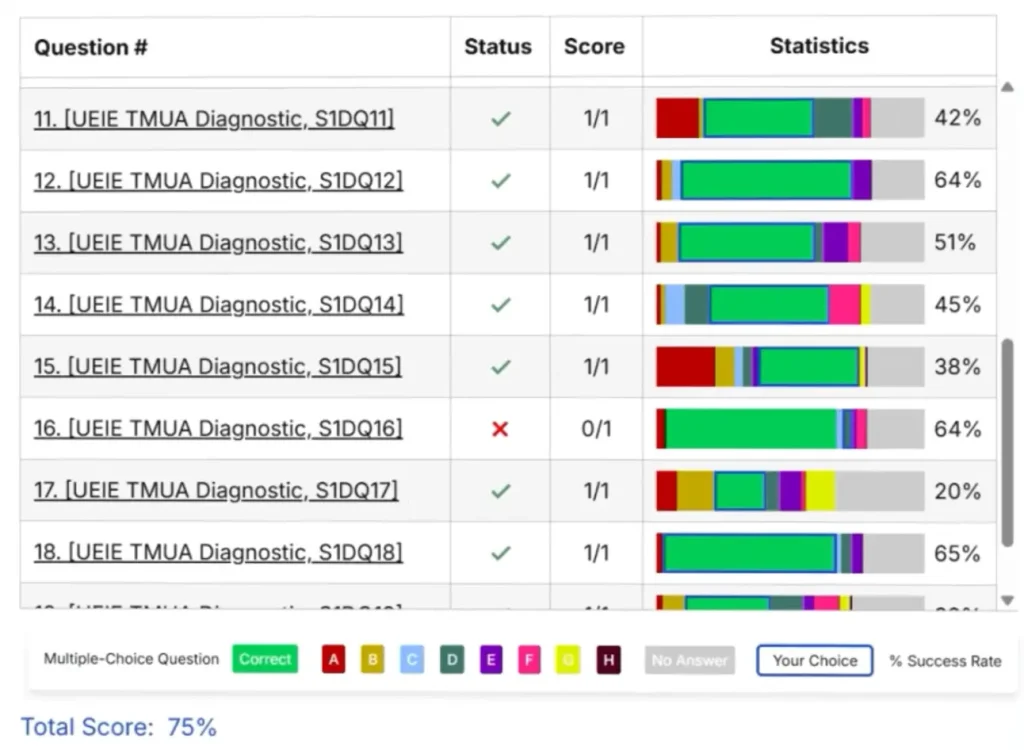

In that article, you can access highly realistic, computer-based diagnostic exams developed exclusively by the UEIE teaching and research team. Use an objective, data-driven diagnosis to pinpoint your current level and take your first step toward a well-planned preparation.