Bidding Farewell to Mysticism: Demystifying the Admissions Selection Model for Oxford and Cambridge Mathematics

In the competitive pool for Oxford and Cambridge, double A*s in Mathematics and Further Mathematics have long since become standard admissions requirements; they fail to demonstrate any competitive advantage for the applicant. This is precisely the core pain point causing deep anxiety among top students applying to Oxford and Cambridge Mathematics, and one that few admissions counselors can thoroughly explain using data:

- Given that academic grades and personal statements are highly homogenised, what kind of sophisticated mechanism do top-tier universities use to ruthlessly and accurately eliminate 80% of those “perfect-score test takers”?

- Behind official rhetoric like “holistic assessment”, what are the actual elimination weightings for each metric?

Today, we will use official data to parse this brutal selection model of Oxford and Cambridge, revealing the true standards used in this high-stakes admissions battle for Mathematics places.

I. Hidden Thresholds: Dissecting the Admissions Requirements for Oxford, Cambridge and G5 Mathematics

When you open the official websites for Mathematics at Oxbridge or the G5, you will usually see a passing line that seems “attainable with just a bit of effort”:

- A-Level Requirements: A*A*A to A*A*A*.

- IB Requirements: A total score of 39-42, with Higher Level (HL) subjects reaching 7, 7, 6 (where Mathematics is usually mandatory at 7).

- AP Requirements: Full marks (5) in at least 5 relevant subjects, and Calculus BC must be a 5.

But if you truly set your goal only at “meeting the minimum requirements on the website”, you are already out the moment you submit your materials. In this application pool where top talents gather, three brutal “hidden barriers” exist:

Barrier 1: A lack of full marks in hardcore sciences is equivalent to an academic shortfall

No matter how euphemistic the university website’s phrasing may be (e.g., “if available”), double A*s in Mathematics and Further Mathematics are the bottom line for A-Level applicants seeking admission to the mathematics-related programs at Oxford and Cambridge; there is no room for negotiation. Similarly, a 7 in IB Mathematics AA HL, or a 5 in AP Calculus BC combined with several other science subjects, is merely the basic configuration. Lacking full marks in these core science subjects is equivalent to exposing an academic weakness, and you will likely be eliminated directly during the initial screening phase.

Barrier 2: The hidden pecking order of the third and fourth subject choices

In a landscape where double A*s or full marks are everywhere, universities have a strong preference for certain subject combinations. Taking the Faculty of Mathematics at Cambridge as an example, over 90% of successful applicants took Physics, effectively treating it as mandatory, and more than half paired it with Chemistry. For the AP system, full marks in hardcore sciences like Physics C (Mechanics and Electricity & Magnetism) and Computer Science A are almost standard. If you choose a relatively “soft” subject just to make up the numbers, even a full score carries far less academic weight in the eyes of admissions officers than a competitor’s full marks in hardcore sciences.

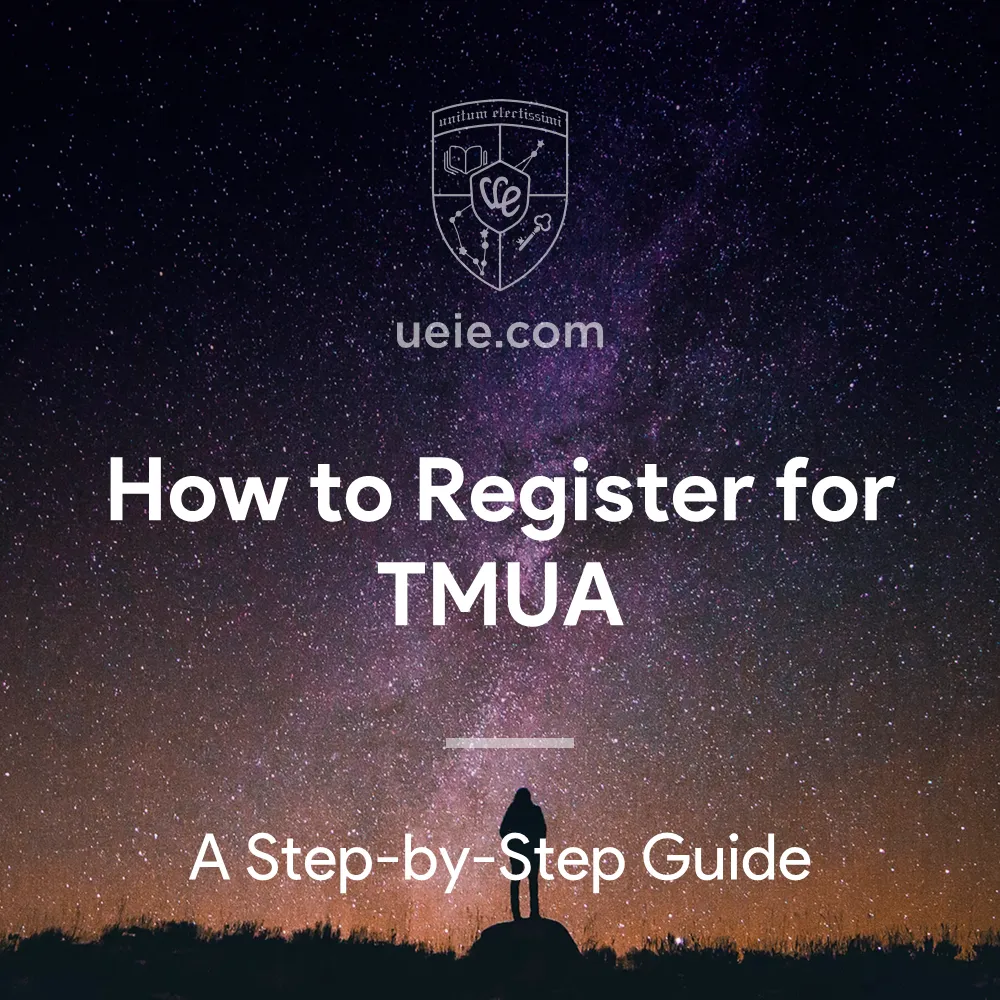

Barrier 3: TMUA has become a mandatory common baseline

According to the official entrance test requirements for the 2027 application cycle released by UAT-UK, TMUA has become an absolute threshold for Mathematics and related subjects:

| University | TMUA requirement |
|---|---|
| The University of Cambridge | From the 2027 application cycle onwards, TMUA scores will serve as a vital basis for issuing interview offers. This means that if you do not first clear the TMUA in October, you will never reach the conditional-offer stage, let alone sit the STEP exam that the offer requires. |
| The University of Oxford | Officially announced the full adoption of TMUA from the 2027 application cycle, completely replacing the previous MAT. This means candidates for Oxford Mathematics (including Statistics), Mathematics and Philosophy, and Mathematics and Computer Science will compete directly in the same admissions pool as Cambridge applicants. |
| Imperial College London | For mathematics-related subjects (including Mathematics and Computer Science), TMUA was fully adopted as a mandatory admission requirement as early as 2024, replacing the original MAT. |
| LSE | Only Economics, Econometrics, and Mathematical Economics explicitly list TMUA as mandatory; for other math-related subjects such as Financial Mathematics and Statistics, the official wording is merely “recommended”. However, in an extremely competitive track, “recommended” is equivalent to “de facto mandatory”. Failing to produce highly competitive TMUA scores is tantamount to voluntarily surrendering your core competitiveness. |

II. Selection Mechanism: Distinctly Different Admissions Funnels for Oxford and Cambridge Mathematics

Faced with a vast number of top students holding straight A*s, Oxford and Cambridge follow two completely different routes in their selection mechanisms, yet they arrive at the same destination—both conduct extremely high-intensity filtering of academic ability.

Based on the latest officially disclosed admission data (2023/24 cycle), we have created the following dynamic chart “Comparison of Oxford & Cambridge Mathematics Admissions Funnels”, which allows you to gain an intuitive understanding of the admissions screening and competitive landscape for mathematics-related programs at Oxford and Cambridge.

You can experiment by selecting different majors from the dropdown menus (for instance, setting one funnel to Oxford Mathematics and the other to Cambridge Mathematics), and also toggle the gender dimension (by clicking between “All,” “Women,” and “Men”) to compare the drastic patterns of candidate attrition at various stages of the admissions process.

From a visual comparison of the aforementioned data, we can distill the underlying core admissions logic:

1. The University of Oxford: Rigorous Preliminary Screening

Taking the Oxford Mathematical Institute as an example, it received a total of 1,929 applications that year and eventually issued 200 offers. Even more sobering than this overall offer rate—which hovers around 10%—is the extremely high elimination rate at the preliminary stages: out of nearly two thousand top students, only 632 received interview offers. This means a staggering 67% of applicants were eliminated before the interview stage!
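The percentages in this paragraph can be checked directly from the three counts it quotes. The snippet below is only a minimal sketch: the variable names and the `share` helper are illustrative, and the interview-to-offer figure is derived here from the quoted counts rather than taken from any official table.

```python
# Counts quoted in the paragraph above for Oxford Mathematics (2023/24 cycle).
applications = 1929
interviews = 632
offers = 200

def share(part, whole):
    """Return part/whole as a percentage, rounded to one decimal place."""
    return round(100 * part / whole, 1)

# Overall offer rate: offers as a share of all applications.
offer_rate = share(offers, applications)

# Share of applicants who never reached the interview stage.
cut_before_interview = share(applications - interviews, applications)

# Derived figure (not stated in the article): offers per interviewed candidate.
offers_per_interview = share(offers, interviews)

print(f"Overall offer rate:         {offer_rate}%")
print(f"Eliminated before interview: {cut_before_interview}%")
print(f"Offers per interviewee:      {offers_per_interview}%")
```

Under these counts the overall offer rate comes to 10.4% and the pre-interview cut to 67.2%, which matches the rounded figures cited above; roughly one in three interviewed candidates then goes on to receive an offer.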

Underlying Logic

Oxford’s admission logic is crystal clear: regardless of how beautiful your grades are on paper, if your entrance test score (fully adopting TMUA from the 2027 cycle) does not reach the red line set internally by the university, professors will not give you the chance to demonstrate your academic potential in an interview.

2. The University of Cambridge: Illusion of Conditional Offers

Compared to Oxford’s rigorous preliminary screening, the admissions funnel for Cambridge Mathematics shows a different form. Among 1,588 applicants, 524 received offers; the offer rate seems to remain high at 33%. Some college counselors often cite this statistic, leading parents to the misconception that “getting into Cambridge Mathematics is easier”; however, they overlook a critical detail: among these 524 excellent students who received offers, only 258 were finally admitted. This means that over 50% of students, after receiving an offer, were ultimately—and regrettably—rejected because they could not meet the stringent STEP exam requirements stipulated in their conditional offers.
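To see why the 33% offer rate is misleading, it helps to compute the retention at each step of the funnel alongside the end-to-end admission rate. The following is a rough sketch using only the three counts quoted above; the stage labels and the `retention` helper are illustrative names, not part of any official dataset.

```python
# Cambridge Mathematics counts quoted above (2023/24 cycle).
funnel = [("applied", 1588), ("offer", 524), ("admitted", 258)]

def retention(stages):
    """Percentage of candidates kept at each step, relative to the previous stage."""
    steps = {}
    for (prev_name, prev_count), (name, count) in zip(stages, stages[1:]):
        steps[f"{prev_name}->{name}"] = round(100 * count / prev_count, 1)
    return steps

steps = retention(funnel)

# End-to-end rate: final admissions as a share of all applicants.
end_to_end = round(100 * funnel[-1][1] / funnel[0][1], 1)

for step, pct in steps.items():
    print(f"{step}: {pct}% retained")
print(f"applied->admitted: {end_to_end}%")
```

With these inputs the offer step retains 33.0% of applicants, the post-offer step retains 49.2% of offer holders, and the end-to-end admission rate is 16.2%, about half of the headline offer rate.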

Underlying Logic

Cambridge’s original intention is to discover student potential during the interview stage as much as possible, hence their willingness to issue more conditional offers. However, what follows is a highly challenging secondary elimination—only those who successfully surmount the academic watershed of the STEP exam emerge as the true winners who have stood the test.

3. Data Perspective: Debunking the "Gender Preference" Admission Myth

In the process of guiding applications, we are often asked by parents: “Do girls have an advantage when applying for STEM subjects?”

When you switch between “Women” and “Men” data for Cambridge Mathematics in the chart, you can see an extremely brutal and realistic answer:

- Data shows that the offer rate for women is about 35.8%, which is indeed slightly higher than the 31.9% for men.

- However, when you turn your gaze to the STEEPEST DROP (the most brutal elimination stage) at the bottom of the funnel, the truth surfaces: among women who received offers, the final success rate (conversion rate) of enrollment was only 33.3%, while the success rate for men at this stage was 55.6%.

Similarly, when you turn to Oxford’s Mathematics program—or several other interdisciplinary majors—the findings are strikingly consistent: in the stages that rely heavily on admissions tests, the elimination rate for female applicants is higher than that for males.
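The two Cambridge percentages quoted for each group can be multiplied to get an approximate end-to-end admission rate per group. This is a back-of-the-envelope sketch that assumes the quoted offer rates and conversion rates refer to the same cohort; the dictionary layout and function name are illustrative.

```python
# Rates quoted above for Cambridge Mathematics, expressed as fractions.
rates = {
    "women": {"offer_rate": 0.358, "conversion": 0.333},
    "men": {"offer_rate": 0.319, "conversion": 0.556},
}

def end_to_end_pct(offer_rate, conversion):
    """Approximate share of applicants finally admitted, as a percentage."""
    return round(100 * offer_rate * conversion, 1)

admit_pct = {group: end_to_end_pct(**r) for group, r in rates.items()}

for group, pct in admit_pct.items():
    print(f"{group}: about {pct}% of applicants finally admitted")
```

On these figures, about 11.9% of female applicants and 17.7% of male applicants are finally admitted, so the slightly higher offer rate for women is more than offset at the post-offer stage.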

Underlying Logic

What does this mean? Cambridge and Oxford employ different selection mechanisms, with Cambridge perhaps more inclined to give students from diverse backgrounds a chance to demonstrate their potential at the interview stage. Yet the grading criteria for both Cambridge’s STEP and Oxford’s MAT (the future TMUA) remain objective and are applied without regard to gender. If one cannot demonstrate top-tier logical reasoning and mathematical proficiency under extreme pressure, the consequences are stark: either, like an Oxford applicant, one is barred outright from the interview stage; or, like a Cambridge applicant, one sees a previously secured conditional offer reduced to a worthless scrap of paper.

4. Macro-Level Admissions Overview: The Elimination Mechanism is No Coincidence

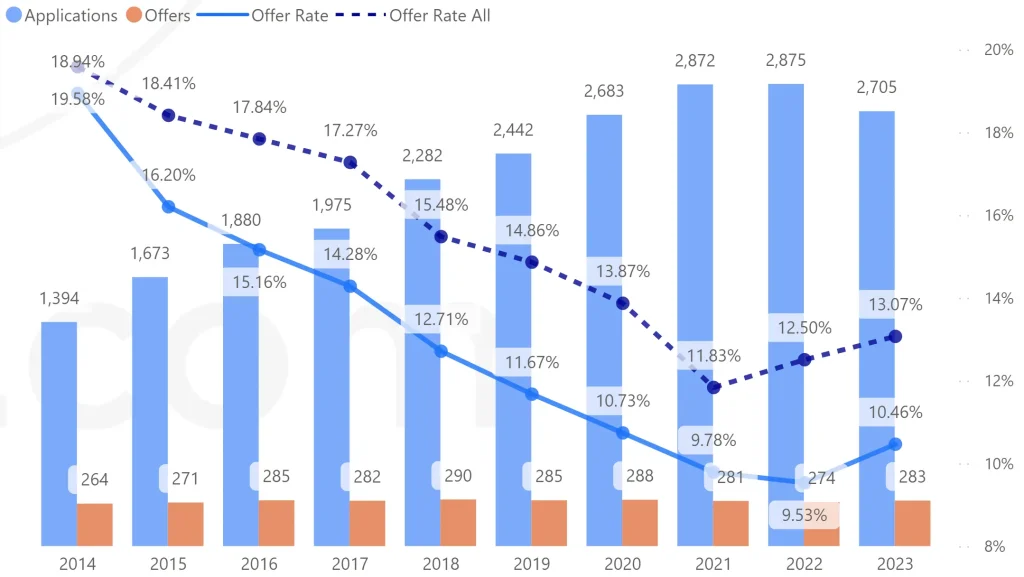

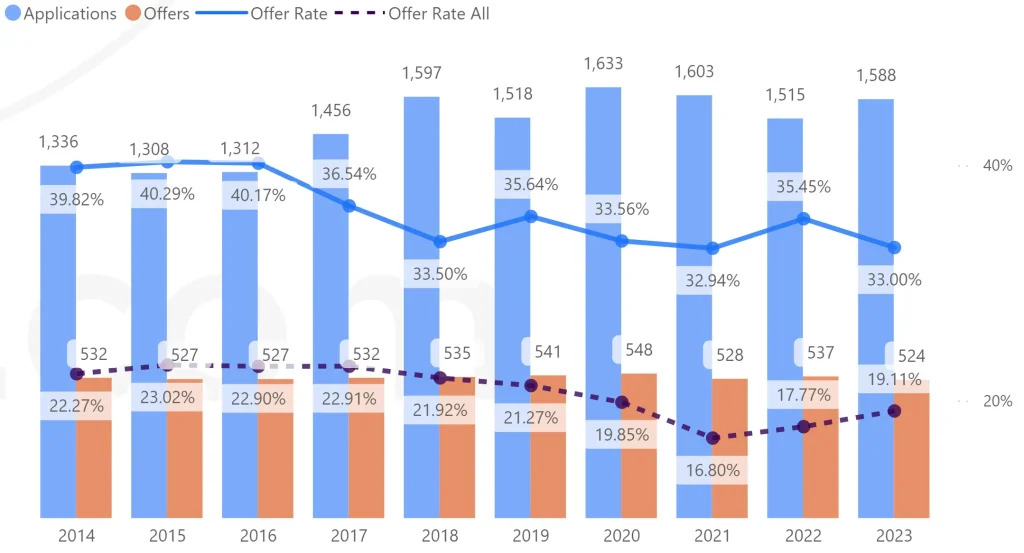

If you think the aforementioned single-year elimination rate is just an accident, you might want to look at the macro trends compiled by UEIE based on official data from the past decade (2014-2023).

Mathematics-related Admissions Data at Oxford during 2014–2023 Application Cycles

(Plotted by UEIE based on official data)

Mathematics-related Admissions Data at Cambridge during 2014–2023 Application Cycles

(Plotted by UEIE based on official data)

Data spanning the past decade clearly corroborates an irrefutable fact: in the competitive landscape of applying for Oxbridge mathematics-related programs—whether through Oxford’s heavily weighted preliminary entrance tests or Cambridge’s post-interview STEP mathematics exam—the critical hurdle that ultimately determines the outcome of an application is invariably these rigorous admissions tests.

III. Core Hurdles: The Underlying Selection Logic of TMUA and STEP

Having just witnessed the stiflingly narrow admissions funnel described earlier, many students and parents are bound to ask: “Given that virtually every applicant holds double A*s in Mathematics and Further Mathematics, where exactly did the 67% whom Oxford screened out before the interview stage—as well as the 50% whom Cambridge rejected based on their STEP results—fall short?”

The answer lies hidden within the TMUA and STEP examinations. By combining the annual TMUA report published by UAT-UK with the 2024 STEP results report released by the University of Cambridge, we gain insight into the three-tiered screening logic employed by Oxford and Cambridge Mathematics in the admissions tests:

1. A Severely Stretched Yardstick and a Battlefield of Titans

A* rates in A-Levels are inflating year by year, and the grade has long since lost the ability to distinguish top students. Whether it is the pre-interview TMUA or the post-offer STEP, the core mission is the same: to stretch out the very top of the score range so that candidates who look identical on paper can be told apart.

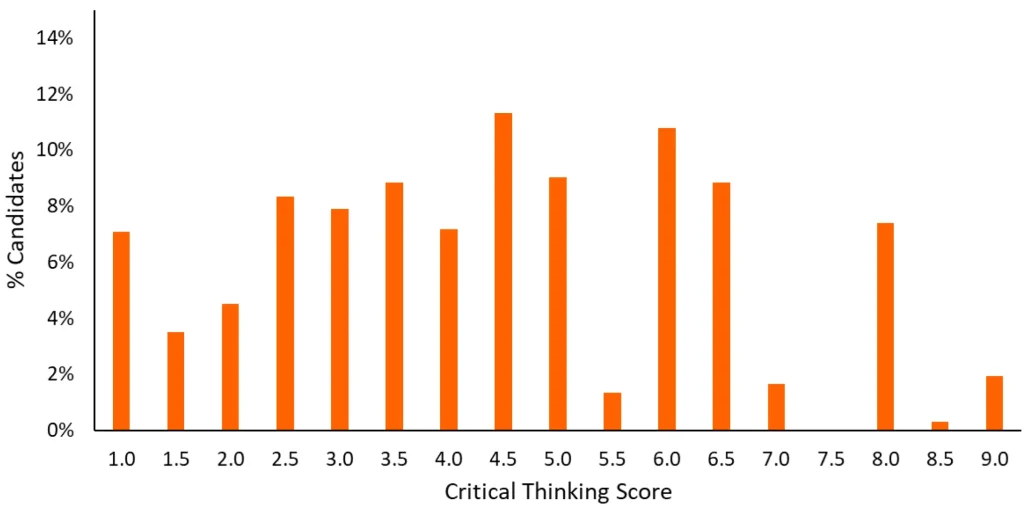

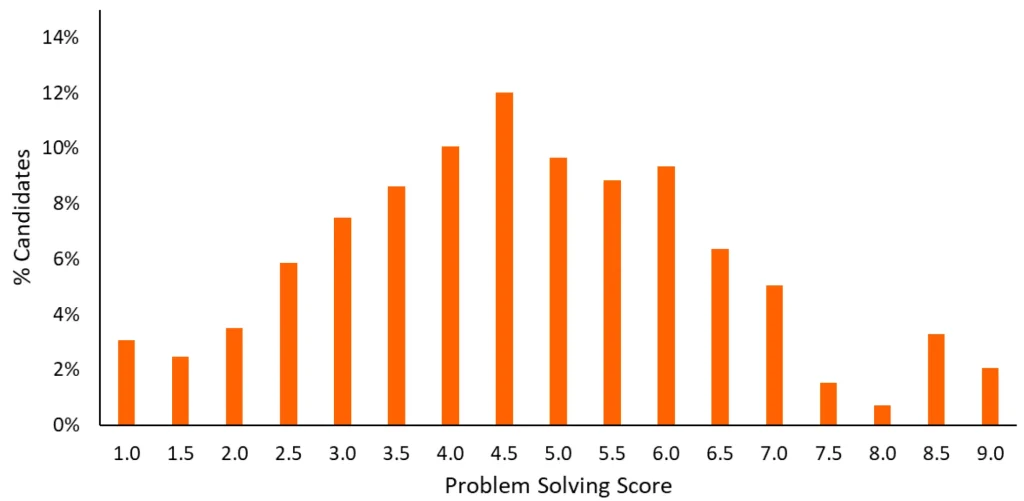

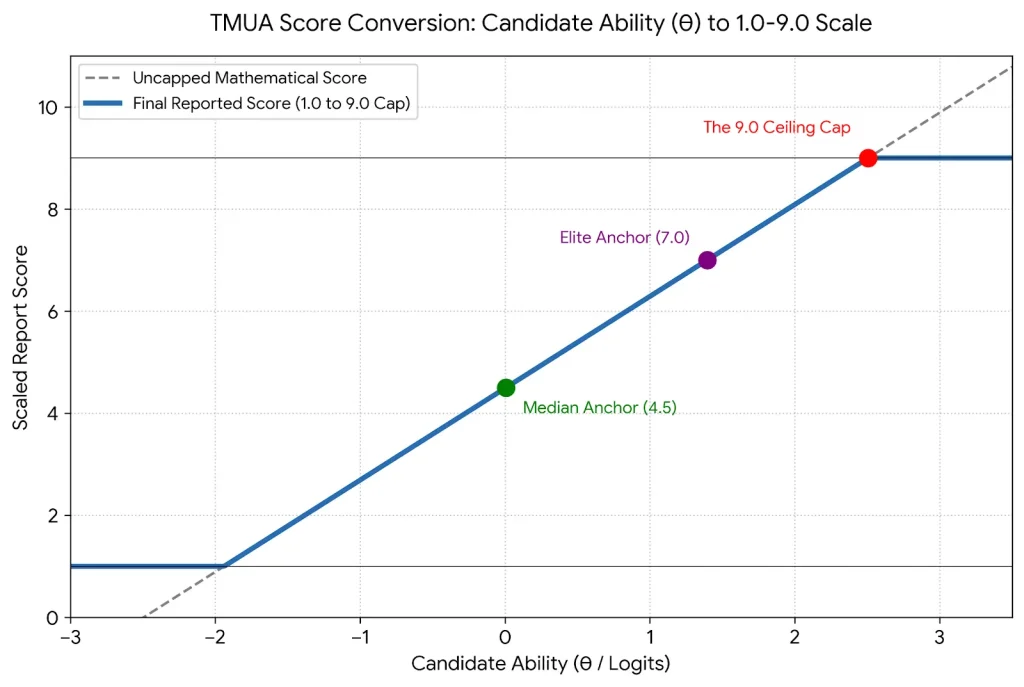

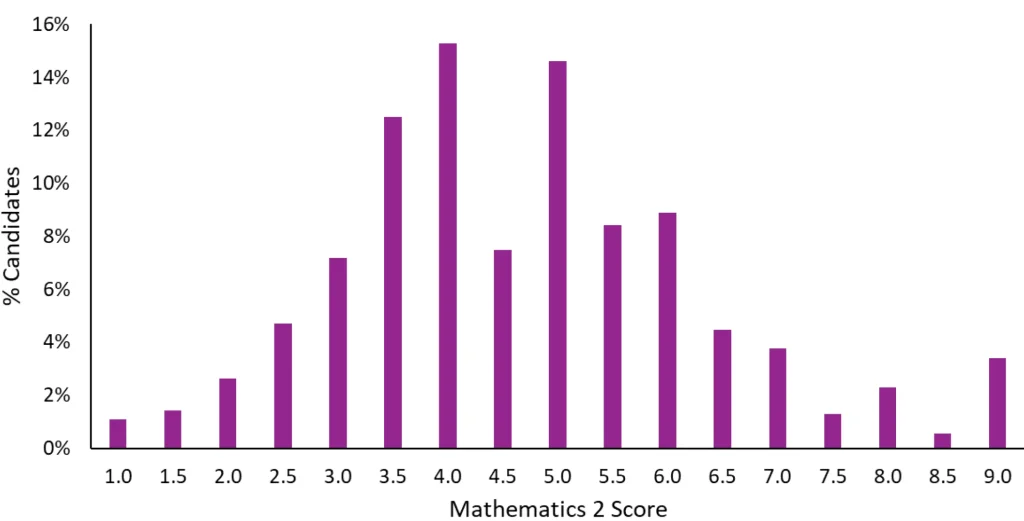

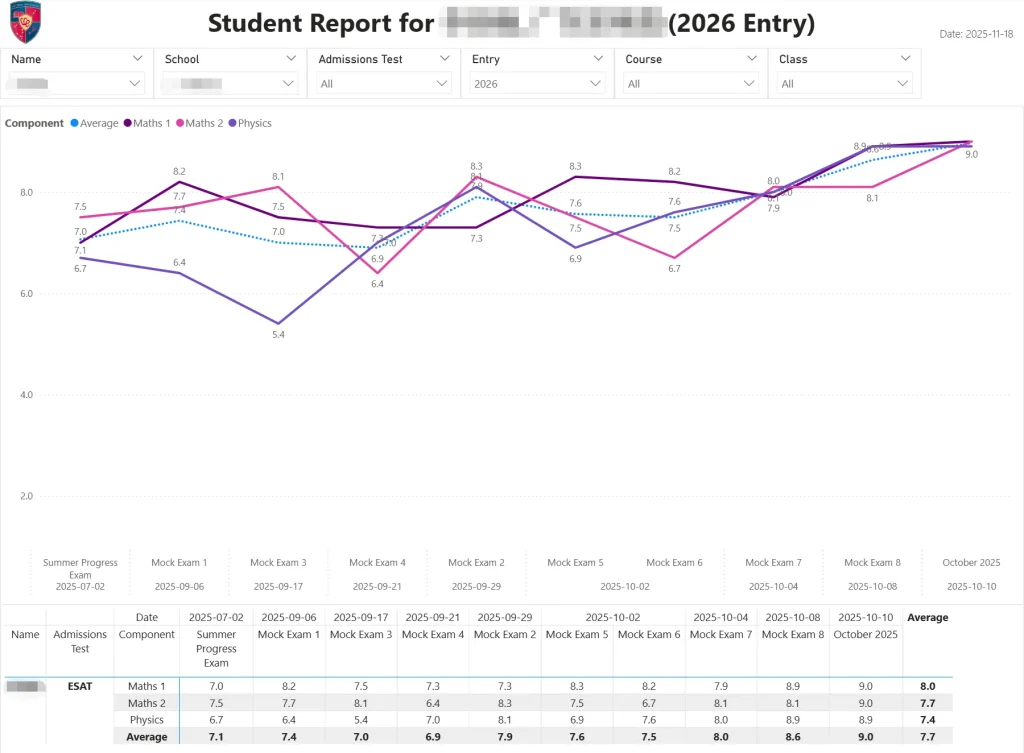

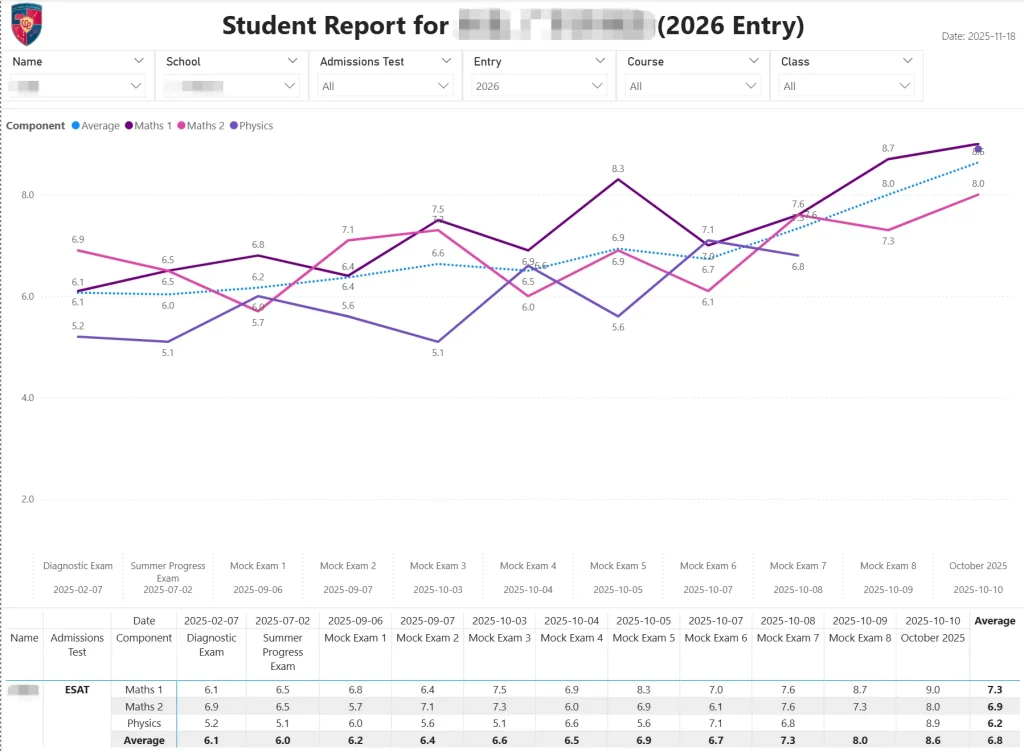

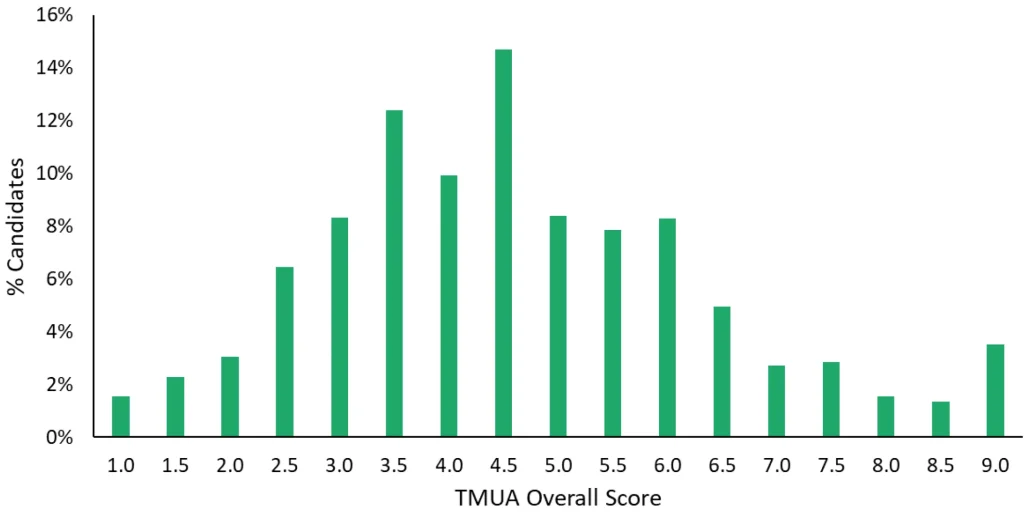

Extreme Competition of the TMUA

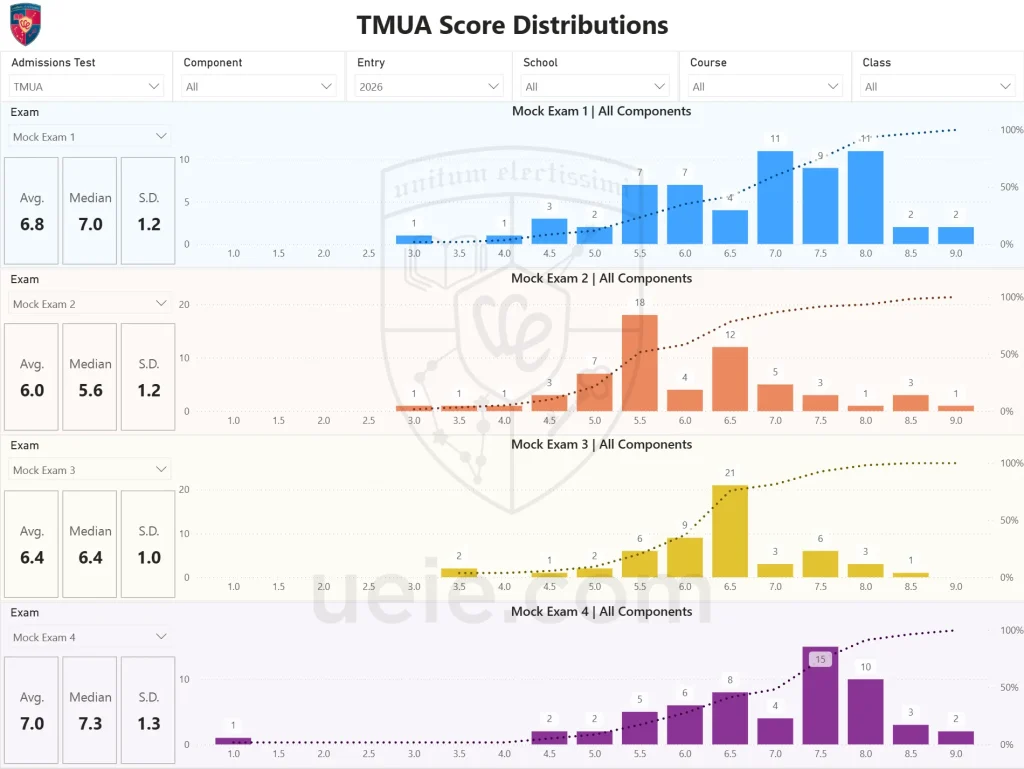

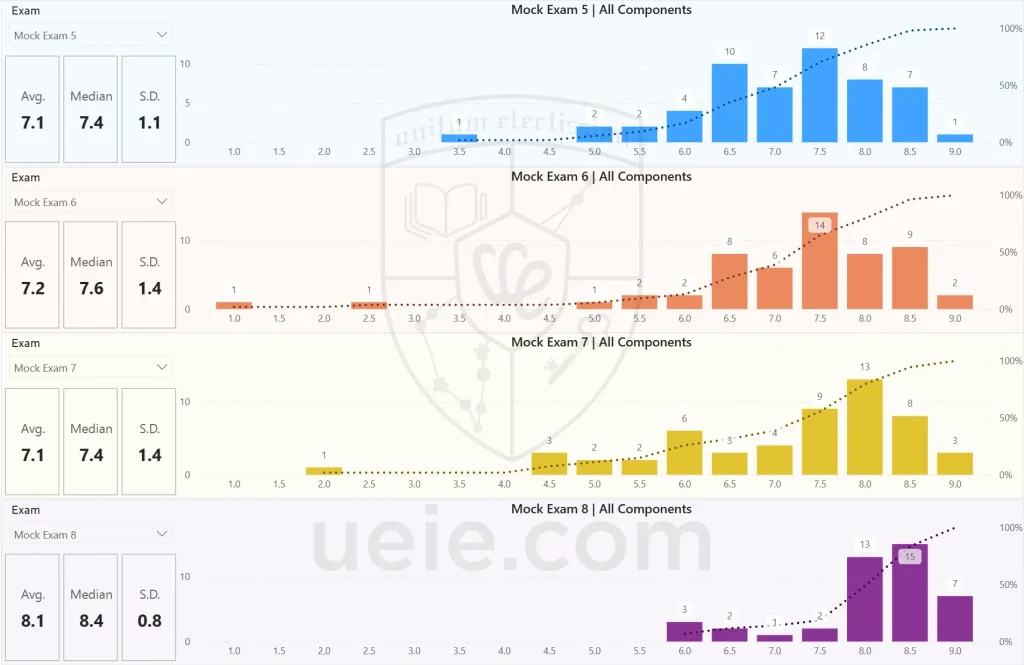

On the TMUA, which carries a maximum score of 9.0, the global average score among nearly 14,000 candidates was a mere 4.20. Notably, the average score for candidates from China stood at 5.42, far above the 3.86 average achieved by candidates within the UK. Even more stark is the fact that the 90th percentile for Chinese candidates sits at 8.4 points, whereas UK-based candidates need only score 5.8 points to secure a spot in the top 10%. Because applicants to mathematics programs are concentrated at the high-scoring end of this distribution, competition within the mathematics pool is fiercer still than these overall figures suggest.

TMUA Global Score Distribution – October 2025

(Screenshot from Official UAT-UK Report)

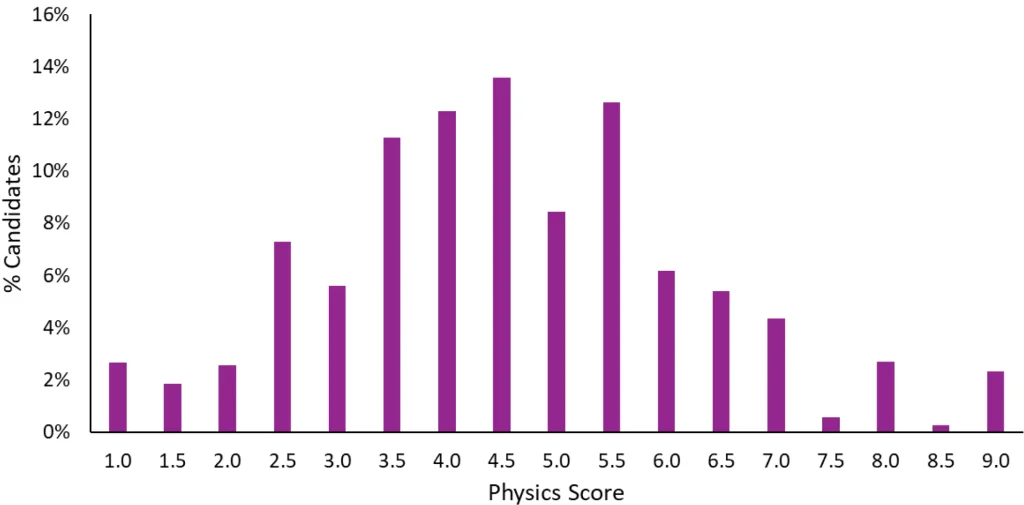

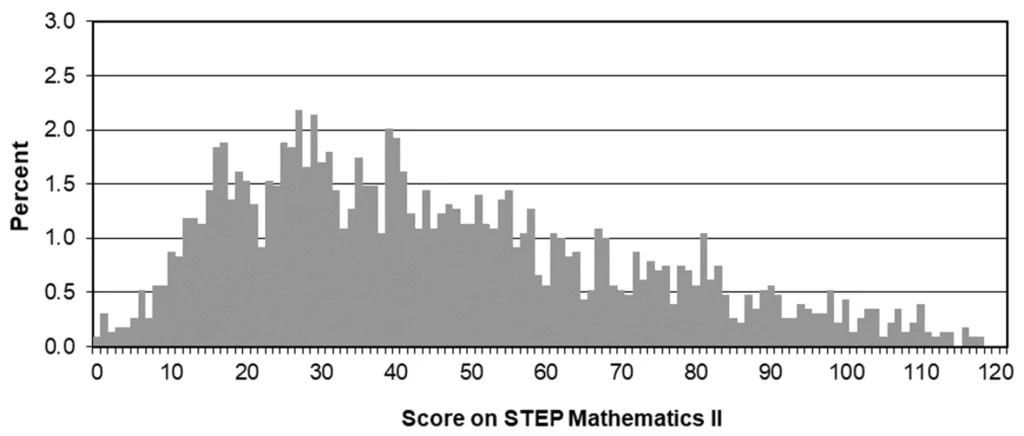

80/20 Rule of the STEP

The Cambridge Mathematics Department typically requires successful applicants to achieve a Grade 1 (Very Good) in both STEP 2 and STEP 3. However, official statistics reveal that in the 2024 STEP 2 examination, only 19.85% of all candidates managed to meet the Grade 1 threshold. This explains why the Cambridge Mathematics Department feels confident in issuing offers so generously: it knows full well that as many as 80% of candidates will be unable to bridge this formidable academic chasm.

2024 STEP Global Grade Distribution

(Screenshot from Official Report)

2. Overwhelming Pressure: A Dual Test from "Instinctive Reaction" to "Extreme Endurance"

The mathematics-related programs at Oxford and Cambridge do not merely use admissions tests as a filter; the two tests screen along complementary dimensions, and together they shut out the traditional high-school tactics of grinding through hard problems by brute force and drilling endless question banks.

TMUA: Instant Processing of Massive Information

It places an extreme test on one’s capacity to process vast quantities of information instantaneously, assessing not merely whether a candidate “can solve the problem,” but—more critically—whether they can “solve it instantly while under extreme, high-pressure conditions.” Official statistics reveal that as many as 23% of candidates spend no more than 10 seconds on at least one question. This indicates that nearly a quarter of the test-takers completely buckle under the weight of certain questions, leaving them with no choice but to resort to blind guessing before submitting their papers. What the TMUA seeks to identify, precisely, are those academic elites who—even when subjected to extreme pressure—can still rely on ingrained “muscle memory” and execute rapid, logical reasoning.


STEP: Profound Academic Foundation

Unlike the fast-paced and concise TMUA, STEP assesses an exceptionally deep level of academic proficiency. Each paper comprises numerous substantial problems; however, only the six questions with the highest scores are counted toward the final grade. The exam structure allows candidates to devote thirty minutes, or even longer, to a single problem, and the official marking scheme explicitly states that a candidate who demonstrates “good progress towards a solution” will be awarded method marks even without arriving at the correct final answer. What this examination seeks to identify are those with a truly mathematical mind: individuals who can keep their composure in uncharted territory and sustain the intellectual endurance that rigorous logical deduction requires.
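The “best six questions” rule described above can be sketched in a few lines. This is only an illustration: the function name is made up, the per-question marks are invented, and the sketch assumes each question is marked out of 20, which the article itself does not state.

```python
def step_paper_total(question_marks, counted=6):
    """Sum the highest-scoring questions, up to `counted` of them."""
    best = sorted(question_marks, reverse=True)[:counted]
    return sum(best)

# Hypothetical candidate who attempted eight questions
# (marks are invented; assumed to be out of 20 each).
attempted = [18, 15, 12, 20, 7, 9, 4, 16]

total = step_paper_total(attempted)
print(f"Counted total from best six: {total}")
```

In this made-up case the two weakest attempts (7 and 4) are simply ignored, giving a counted total of 90. The design rewards depth on a few problems over thin coverage of many, which is consistent with the emphasis on sustained reasoning described above.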


3. Stripping Away the Language Excuse: Directly Hitting the Core of Pure Mathematical Logic

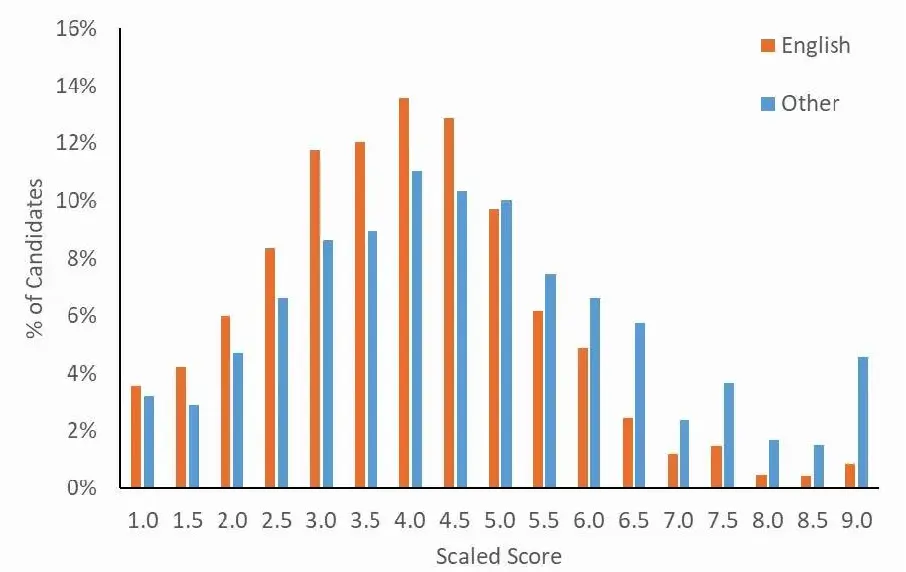

Many international students, after failing their admissions tests, tend to attribute their failure to the excuse that “the questions contained too many long, complex English sentences that they couldn’t understand.” However, official data ruthlessly shatters this form of self-consolation.

Comparison of Score Distributions by First Language: English vs. Other

(Screenshot from Official UAT-UK TMUA Technical Report, published in September 2025)

Counter-intuitive Data

According to the TMUA report, candidates whose first language is not English (average score 4.61) performed significantly better than native English speakers (average score 3.94).

Underlying Logic

The official report explicitly states that the “Language Load” of these unified mathematics tests is extremely low. This means that in this battle, there are no excuses for “language disadvantage.” It strips away all superficial trappings, directly measuring the candidate’s true acuity—deep within the brain—for mathematical intuition and logical proof.

IV. The Ultimate Touchstone: What Kind of Brain is Oxbridge Looking For?

Having successfully cleared the critical hurdle of the admissions tests, candidates proceed to the interview stage—the decisive phase that determines their ultimate placement. At this juncture, the assessment criteria employed by Oxford and Cambridge prove to be remarkably consistent. A review of the official statements from the Mathematics departments at both Oxford and Cambridge reveals three distinct core attributes central to the admissions selection process at these prestigious institutions—precisely the qualities that the interviews are designed to rigorously evaluate:

1. The Official Perspective: Not Merely Knowledge, but "Intellectual Flexibility"

Cambridge officials state that they value not only a solid foundation in mathematics but also mathematical ability—namely, the creativity to build connections between different concepts and the flexibility to quickly understand new concepts and use them to solve challenging problems.

The admissions criteria for the Department of Mathematics at the University of Oxford mirror those of Cambridge exactly: not only do they require applicants to be able to construct “clear and concise mathematical arguments,” but during the interview stage, professors place even greater emphasis on a candidate’s ability to “assimilate new ideas or apply existing knowledge to challenging new contexts.”

What Oxford and Cambridge truly value is not how many formulas beyond the standard curriculum you have memorized in advance, but rather whether you possess a foundational core of mathematical thinking—a mental framework that can be continuously guided and expanded upon.

2. The Essence of Interviews: Rehearsal for an Academic Guidance Session Under High Pressure

To assess this very “flexibility,” Oxbridge interviews are by no means mere casual chats or personality tests; rather, they serve as high-intensity simulations of a one-on-one academic tutorial (or “supervision”).

Professors will deliberately pose unfamiliar, challenging problems that extend far beyond the scope of the high school curriculum—sometimes even engaging in rigorous academic derivations right there on a whiteboard. Their objective is not to see whether you can instantly solve the problem, but rather to observe what happens when you get stuck. When you find yourself completely stumped, the professor will offer a hint. At this juncture, the true litmus test emerges: Do you possess “teachability”? Can you quickly grasp the professor’s guidance, maintain your composure, and continue to navigate forward along an uncharted chain of logic? This capacity to resonate—to find a shared wavelength—with world-class scholars while navigating through uncharted intellectual waters is the very core of successfully clearing an academic interview.

3. Warmth Behind Cold Data: Why Are One-Third of Admitted Students Exceptions?

While the selection process at Oxford and Cambridge is undoubtedly rigorous, it is by no means a cold, calculating machine concerned solely with numerical scores. The Department of Mathematics at Cambridge has released a set of highly revealing admissions statistics:

“Although STEP serves as a crucial benchmark for issuing conditional offers ... in reality, only about two-thirds of the students ultimately admitted actually met the required STEP grade thresholds. For the remaining one-third of the places, the colleges undertake a comprehensive re-evaluation of the complete application materials (including the actual STEP examination scripts) submitted by those candidates who fell short of the standard.”

This highlights the core value underpinning admissions for Mathematics programs at Oxford and Cambridge: holistic assessment. A machine can perceive only the final outcome, whereas a professor can discern the underlying process. If, during your academic interview, you demonstrate unparalleled motivation and exceptional intellectual potential—even if you fall just shy of the required grade in the final STEP examination—the university remains willing to open its doors to you, provided your exam scripts reveal a truly impressive display of logical deduction.

V. Conclusion: Clarifying Your Position—Every Strategy Requires Time to Take Root

In this article, we have stripped away the pleasantries found on official websites—moving from admissions funnel data and the rigorous grading of computer-based tests to the ultimate interrogation of the academic interview—to reveal the unvarnished truth regarding the actual admissions thresholds for Mathematics programs at Oxford and Cambridge.

However, for the individual, all macro-level admission probabilities and official selection logic ultimately boil down to just two outcomes: 0 or 1. Once we clearly grasp these ruthless rules, we come to realize a fundamental reality:

Applying to Oxford or Cambridge is never a battle that can be won through last-minute cramming.

Given that admissions test scores serve as the primary gatekeeper, and that the mathematical mindset and resilience tested at interview cannot be cultivated overnight, the one thing you cannot afford in this admissions cycle, where competition has reached an all-time high, is to squander these precious few months on blind trial and error.

Every strategy must be built upon an objective assessment of your own true capabilities. Rather than lingering in the anxiety of a “clash of titans,” you are better served by first taking stock of your own hand.

For guidance on how to internalize the skills needed to clear these thresholds—specifically within the context of the brand-new admissions tests system—and how to scientifically structure your study schedule for the coming months, we strongly recommend reading this practical guide in conjunction with this article:

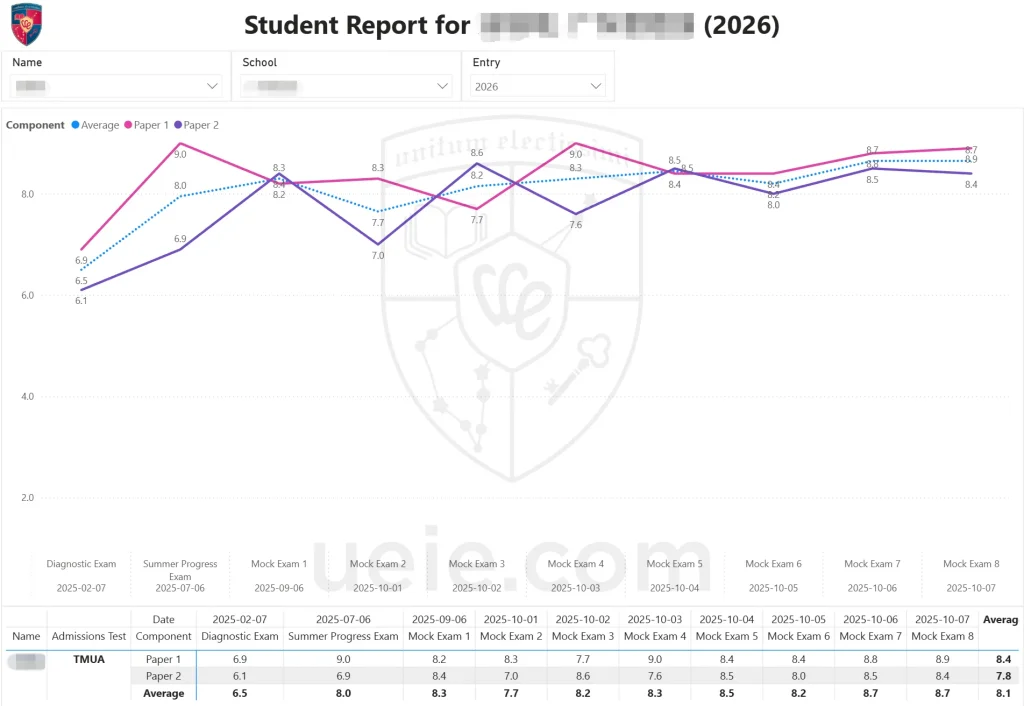

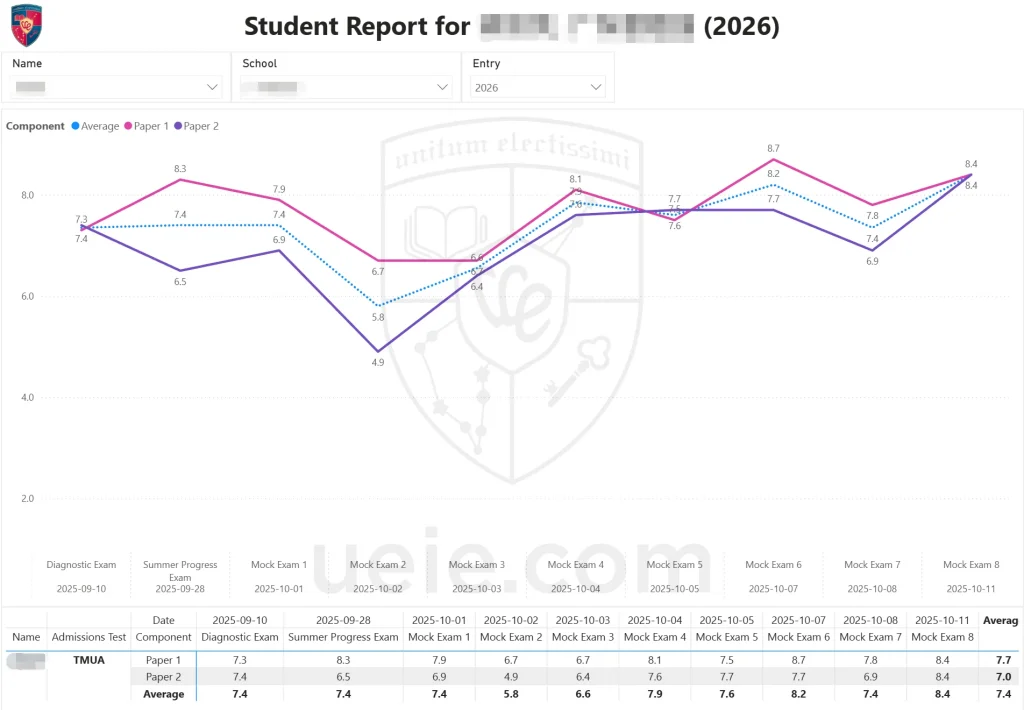

Oxbridge Admissions Tests Reform: Is Your Prep Timeline on Track?

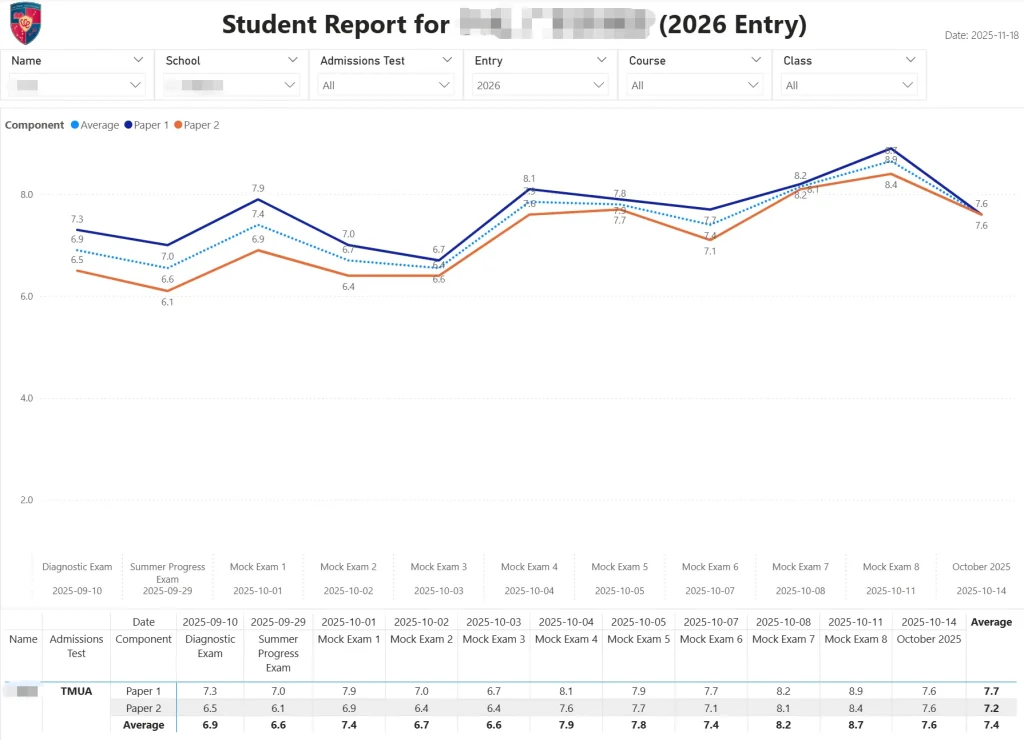

In that article, you can access a set of highly realistic diagnostic exams—exclusively developed by the UEIE Education & Research Team—designed to simulate the actual computer-based admissions tests. Use this objective, data-driven diagnostic assessment to pinpoint your current proficiency level and take the crucial first step toward a scientifically guided path of academic advancement.